About 3 minutes after your first customer feedback or Net Promoter® survey goes out, management will be pounding the desk for a report with charts, analysis and recommendations.

The question is, apart from a simple chart of scores, what else should be included in your best practice Net Promoter reporting pack?

In this post I’ll take you through what’s important to include and exclude to maximize impact.

- NPS Reporting Goals

- Net Promoter Score Reporting Considerations

- The Nine Critical NPS Reporting Requirements

- 1. Properly Handle Small Sample Sizes

- 2. Include Statistics to Clearly Identify "Real" Change

- 3. Track Response Rates to Reduce Score Begging

- 4. Report Monthly… at Least

- 5. Report on Score Drivers

- 6. Track Loop Closing

- 7. Include Seasonality Adjustments, If Appropriate

- 8. To Weigh or Not to Weigh Is the Question

- 9. Track NPS Related Projects

NPS Reporting Goals

Let’s start with the goals of your customer feedback report – what are you trying to achieve?

Charting the Course

Tracking the changes in scores is an important outcome. You need to know if the score is going up or down or neither so you can take action.

Importantly, you need to understand margin of error and how it determines whether score changes are real or just an outcome of the sampling process.

Helping The Business Focus on the Right Areas

Your report is the best way to help the organization to focus on the right things. If you only report on scores, that is all anyone will care about.

So you need to ensure that your report includes all of the important elements in a balanced way.

Net Promoter Score Reporting Considerations

Sample Sizes Can Be Too Small

Reporting NPS and customer satisfaction is not the same as reporting revenue, cost or other exhaustively collected values.

You count every dollar and cent of revenue; there are no estimations. In contrast, NPS, CSAT and similar data are sampled, not exhaustively collected. This difference has practical impacts on reporting because it introduces the idea of statistical error in your results.

Generally, smaller sample sizes have larger margins of error than larger samples.

While you may have a good sample size when considering all the responses, it can fall dramatically when you segment the data down to smaller and smaller groups of customers, for instance when you segment by state, product and touch point.

In these areas you can easily find your sample size getting quite small and the sample error expanding quickly.

This can be a real issue for Business to Business organisations where the number of responses they receive is smaller because they have fewer customers.

Small samples can be compared but you need to be cautious and ensure that you calculate the error in the sample. More about this later.
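To make the link between sample size and error concrete, here is a minimal Python sketch. It assumes the standard 0 to 10 scale (9 and 10 are promoters, 0 to 6 are detractors) and uses a normal approximation for a 95% margin of error. The function name and the example data are made up for illustration, and the approximation gets rough for very small samples.

```python
import math


def nps_with_margin(scores, z=1.96):
    """Return (nps, margin_of_error) in NPS points for a list of 0-10 ratings.

    z=1.96 corresponds to an approximate 95% confidence level.
    """
    n = len(scores)
    if n == 0:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9) / n
    detractors = sum(1 for s in scores if s <= 6) / n
    nps = promoters - detractors
    # Treat each response as +1 (promoter), 0 (passive) or -1 (detractor).
    # The variance of that variable is p_promoter + p_detractor - nps^2.
    variance = promoters + detractors - nps ** 2
    margin = z * math.sqrt(variance / n)
    return 100 * nps, 100 * margin


# Hypothetical data: the same mix of answers at two sample sizes.
small = [10] * 15 + [8] * 9 + [3] * 6   # 30 responses
large = small * 10                      # 300 responses

for label, sample in (("30 responses", small), ("300 responses", large)):
    score, margin = nps_with_margin(sample)
    print(f"{label}: NPS {score:.0f} +/- {margin:.1f}")
```

Both samples have an NPS of 30, but the margin of error is roughly 28 points with 30 responses and roughly 9 points with 300. That is why a heavily segmented score can swing wildly without anything real having changed.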

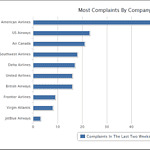

Focusing Only on the Score

People often tell me they want to implement NPS® to keep an eye on their staff. For these people, the first order of business is to graph each person’s NPS and compare them to find the underperformers.

This is a mistake and only serves to drive score begging behaviour in your business. The upshot of this behaviour is, ironically, less information about the customer the more surveys you receive.

Certainly you need to track NPS and Customer Satisfaction scores but it needs to be balanced with other data and you probably don’t want to give front line staff NPS goals.

Wanting to Change Scores That Seem “Wrong”

Humans being, well, human, they don’t always do what you expect.

Sometimes they give you an NPS score of 3 and write:

I really love your company, you’re great.

Or a 0 and say

I love [company x] but I make it a policy never to recommend insurance companies.

And, yes I’ve seen this exact comment.

Or a 10 and comment that:

Your local office is really terrible at calling me back.

Naturally this raises the question: how should you handle these seemingly inconsistent responses? Delete them, adjust the score to what you think matches the comment, or do nothing?

Here are the reasons you should do nothing:

- As soon as you start to change scores you introduce individual value judgements. How “wrong” is wrong? In the example above do you delete all three or just the middle one? Who decides? In the end you spend more and more time deciding how to adjust the data and less and less time doing something with it.

- The absolute NPS means nothing. It’s only the changes in score relative to each other that are important. So long as the audience is the same, these variations will be consistent in the long run and not affect your relative scores.

- Focus on the score at your peril: from above, the score is important but not the only thing.

Integrating Relationship and Transactions Scores

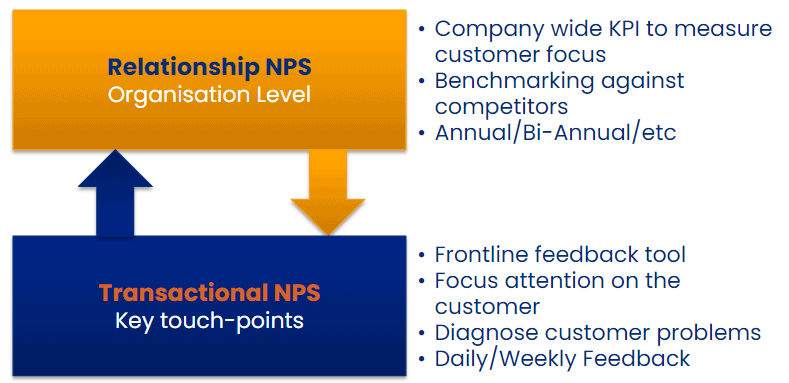

Relationship and Transactions scores are completely different and cannot meaningfully be compared.

Top down or relationship scores focus on your overall positioning against competitors; your strengths and weaknesses.

Bottom up or transactional scores focus on your business processes and continuous improvement.

The link between the two is that changes in bottom up NPS should lead (in time) to changes in top down NPS, as improvements in the business translate, longer term, into improvements in overall customer perception versus your competitors.

However, you cannot meaningfully combine the scores through averaging or summing. So don’t try, just report them separately.

The Nine Critical NPS Reporting Requirements

So with some goals and issues identified we can start to build our best practice reporting process.

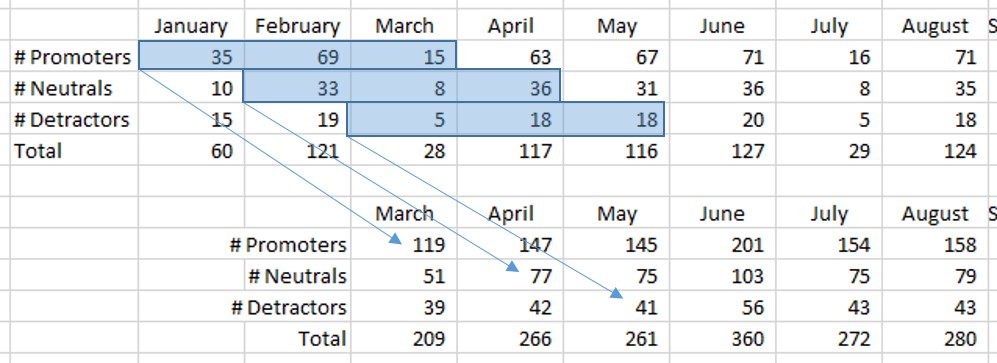

1. Properly Handle Small Sample Sizes

If you have small sample sizes (<100 responses per reporting segment) try rolling up the data to provide greater response volumes.

For instance, instead of reporting monthly scores with 30 responses per month, try reporting “three month rolling scores”.

Using rolling scores like this can mean that it takes longer to perceive changes in the underlying business, but you can be more certain that the changes are real when you see them. You will also be less likely to jump to the wrong conclusions.
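A rolling score can be sketched in a few lines of Python. This version assumes you have the raw 0 to 10 ratings grouped by month, and it pools the responses across the window before calculating NPS rather than averaging the monthly scores, so months with more responses count for more. The function names and the data layout are illustrative assumptions, not a prescribed format.

```python
def nps(scores):
    """NPS in points for a list of 0-10 ratings, or None if the list is empty."""
    if not scores:
        return None
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def rolling_nps(monthly_scores, window=3):
    """Pool the trailing `window` months of responses and score each pool.

    monthly_scores: list of (month_label, [ratings]) in chronological order.
    Returns a list of (month_label, nps, response_count), one entry per
    month that has a full window behind it.
    """
    results = []
    for i in range(window - 1, len(monthly_scores)):
        pooled = [
            rating
            for _, ratings in monthly_scores[i - window + 1 : i + 1]
            for rating in ratings
        ]
        results.append((monthly_scores[i][0], nps(pooled), len(pooled)))
    return results


# Hypothetical data: only a handful of responses per month.
data = [
    ("Jan", [10, 9, 3]),
    ("Feb", [8, 7, 10]),
    ("Mar", [2, 9, 10]),
    ("Apr", [10, 10, 6]),
]
for month, score, count in rolling_nps(data):
    print(f"3 months to {month}: NPS {score:.0f} from {count} responses")
```

Reporting the response count next to each rolling score lets readers see how much data sits behind the number.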

Or, if the number of scores for a particular team is too small, try rolling up the score to the organisational unit at the next level.

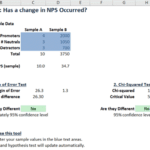

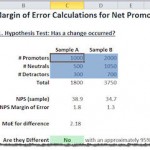

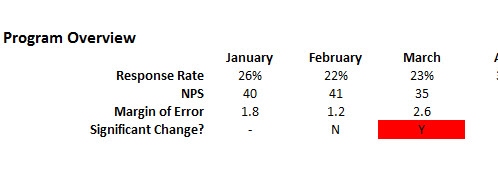

2. Include Statistics to Clearly Identify “Real” Change

Whenever you report your NPS or CSAT score you should also report some type of margin of error or perform some simple customer satisfaction statistical tests so you know if the changes in the score are likely to be significant.

This is important — you must provide the people reviewing your report with an idea of the variation in the scores so they can be confident in making decisions.

The statistics for Net Promoter Score are a little more complex but there are NPS statistical tools that you can download and use.
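If you want a feel for what such a test does, the sketch below compares the NPS from two periods using a two-sample z-test with a normal approximation. It is one common way to check a change, not necessarily what any particular tool uses. It assumes the two samples are independent and reasonably large, and the function names and counts are hypothetical.

```python
import math


def nps_and_se2(promoters, passives, detractors):
    """Return (NPS as a fraction, squared standard error) from category counts."""
    n = promoters + passives + detractors
    if n == 0:
        raise ValueError("need at least one response")
    p_pro = promoters / n
    p_det = detractors / n
    nps = p_pro - p_det
    # Variance of a +1 / 0 / -1 variable with these proportions.
    variance = p_pro + p_det - nps ** 2
    return nps, variance / n


def nps_change(before, after, z_crit=1.96):
    """Compare two periods given as (promoters, passives, detractors) counts.

    Returns (change_in_points, z_statistic, is_significant), where
    z_crit=1.96 corresponds to an approximate 95% confidence level.
    """
    nps_b, se2_b = nps_and_se2(*before)
    nps_a, se2_a = nps_and_se2(*after)
    diff = nps_a - nps_b
    se = math.sqrt(se2_b + se2_a)
    if se == 0:
        # No variation at all in either sample.
        z = 0.0 if diff == 0 else math.copysign(math.inf, diff)
    else:
        z = diff / se
    return 100 * diff, z, abs(z) > z_crit


# Hypothetical example: the same 15 point rise at two sample sizes.
print(nps_change((40, 40, 20), (50, 35, 15)))        # 100 responses per period
print(nps_change((400, 400, 200), (500, 350, 150)))  # 1,000 responses per period
```

With 100 responses per period the 15 point rise gives a z statistic of about 1.4, which falls short of the 1.96 threshold, so it could easily be sampling noise. With 1,000 responses per period the same rise gives about 4.5 and is very unlikely to be noise.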

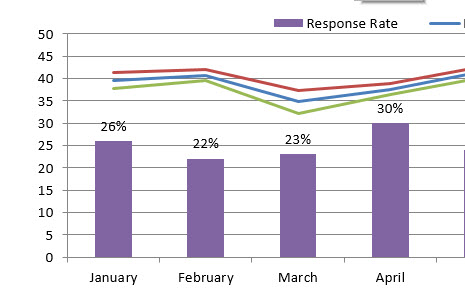

3. Track Response Rates to Reduce Score Begging

One antidote to score begging is to set goals for front line staff not on CSAT or NPS score but on response rate.

Front line staff can positively impact response rates by letting customers know they may receive a survey and how important their feedback is.

This has two benefits:

- It reduces the level of score begging

- It lifts the number of responses you receive.

So this is a win/win for the process.

4. Report Monthly… at Least

Research has shown that companies reporting their CSAT or NPS scores at least monthly have a higher success rate than those that don’t, so report at least every month.

The action is simple — ensure that you report on your program every month at a minimum.

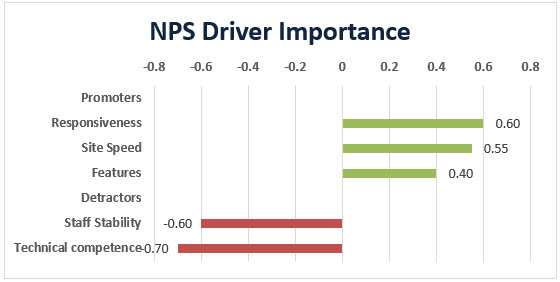

5. Report on Score Drivers

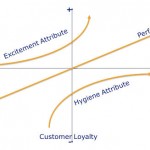

The score is interesting but far more important are the drivers of the score.

If the goal is to improve the score, and it generally is, then you also need to report on what you believe influences the score.

Note that it is generally only a small number of the product or service attributes that will drive most of the score.

Partly this is an outcome of the Pareto Principle and partly it is because people just can’t keep more than a few ideas in their mind at any one time. 7 +/- 2 is the generally accepted number but even that may be a high estimate based on more recent research.

So if you are showing 15 different important drivers for the score, you probably have too many.

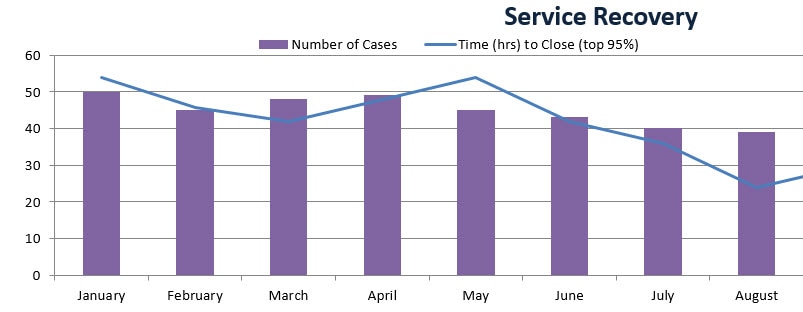

6. Track Loop Closing

One of the drivers of NPS is how quickly you close the service recovery loop. Closing the loop with unhappy customers can give you 11 points of Net Promoter by itself.

So one of the numbers you should be tracking is the number of requests closed and the speed with which they are closed.
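As a rough illustration, these two numbers can be produced from a simple list of follow-up cases. The sketch below assumes each case records when it was opened and when (if ever) it was closed. The record layout and function name are hypothetical, and the median is used because a few very slow cases can distort an average.

```python
from datetime import datetime
from statistics import median


def loop_closing_summary(cases):
    """Summarise follow-up cases.

    cases: list of (opened, closed) datetime pairs, with closed set to None
    while the case is still open.
    """
    hours_to_close = [
        (closed - opened).total_seconds() / 3600
        for opened, closed in cases
        if closed is not None
    ]
    total = len(cases)
    return {
        "total_cases": total,
        "closed_cases": len(hours_to_close),
        "closed_rate": len(hours_to_close) / total if total else None,
        "median_hours_to_close": median(hours_to_close) if hours_to_close else None,
    }


# Hypothetical cases: two closed, one still open.
cases = [
    (datetime(2021, 3, 1, 9, 0), datetime(2021, 3, 1, 15, 0)),
    (datetime(2021, 3, 2, 9, 0), datetime(2021, 3, 3, 9, 0)),
    (datetime(2021, 3, 3, 9, 0), None),
]
print(loop_closing_summary(cases))
```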

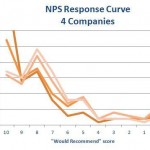

7. Include Seasonality Adjustments, If Appropriate

Many, if not most, NPS and CSAT scores will show some level of seasonality. That is, scores will rise and fall in line with regular occurrences in the business.

For example, if you supply software used by accountants you might expect support calls to rise dramatically near the end of the financial year when those accountants are madly trying to get everyone’s tax return done.

That will probably depress your score: partly due to stressed accountants giving lower scores and partly due to higher service loads reducing real service levels.

So check your data for seasonality. If you do notice seasonality you should consider performing some seasonality adjustments on the scores to make comparison more valid.

Of course, a simple way to tackle seasonality is to compare scores a year apart, e.g. March last year with March this year. That way you are comparing the score at the same position in the seasonality cycle.
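The year-apart comparison is easy to automate. Here is a minimal Python sketch, assuming you already have one NPS figure per month keyed by year and month. The function name and all the scores are made up for illustration.

```python
def year_over_year(monthly_nps):
    """Change in NPS versus the same month one year earlier.

    monthly_nps: dict mapping (year, month) to an NPS figure.
    Months with no figure for the previous year are skipped.
    """
    changes = {}
    for (year, month), score in sorted(monthly_nps.items()):
        previous = monthly_nps.get((year - 1, month))
        if previous is not None:
            changes[(year, month)] = score - previous
    return changes


# Hypothetical scores where March is always the seasonal low point.
scores = {
    (2020, 3): 20,
    (2020, 6): 35,
    (2021, 3): 26,
    (2021, 6): 33,
}
for (year, month), change in year_over_year(scores).items():
    print(f"{year}-{month:02d}: {change:+d} points versus a year earlier")
```

In this made-up data March 2021 looks like a 9 point fall if you compare it with June 2020, but it is a 6 point rise against March 2020, which is the fairer comparison.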

8. To Weigh or Not to Weigh Is the Question

Weighting data means giving more value to some scores than others. For instance, giving more importance to the responses from large or frequent customers than from small or infrequent customers.

This type of weighting can be a good idea but needs to be used with caution to ensure that you weight the data in a way that is in line with your company goals.

If, for instance, you weight responses based on revenue but high revenue clients also show low margins, you might be working against yourself.
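To see how much the choice of weight can move the number, here is a minimal Python sketch of a weighted NPS. It assumes each response carries a single numeric weight (revenue, margin, purchase frequency or whatever matches your goals). The function name and the data are hypothetical.

```python
def weighted_nps(responses):
    """NPS in points where each response counts in proportion to its weight.

    responses: list of (rating, weight) pairs with 0-10 ratings and
    non-negative weights. Equal weights give the ordinary NPS.
    """
    total_weight = sum(weight for _, weight in responses)
    if total_weight <= 0:
        raise ValueError("total weight must be positive")
    promoters = sum(weight for rating, weight in responses if rating >= 9)
    detractors = sum(weight for rating, weight in responses if rating <= 6)
    return 100 * (promoters - detractors) / total_weight


# Hypothetical data: three small happy customers and one large unhappy one.
ratings = [10, 9, 8, 3]
revenue = [1, 1, 1, 7]

unweighted = weighted_nps([(r, 1) for r in ratings])
by_revenue = weighted_nps(list(zip(ratings, revenue)))
print(f"Unweighted NPS: {unweighted:.0f}")
print(f"Revenue weighted NPS: {by_revenue:.0f}")
```

In this made-up example the unweighted score is 25 while the revenue weighted score is -50. The same responses tell a very different story depending on the weight, so report which weighting you used alongside the score.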

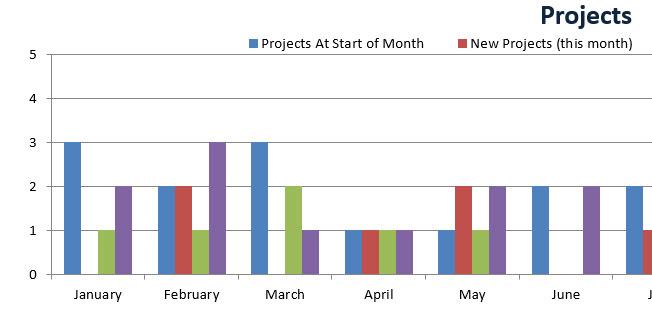

9. Track NPS Related Projects

The only reason you’re collecting this data is to improve the business. After all, you don’t make a pig fatter by weighing it.

On that basis you should be reporting the initiatives that have been uncovered from the customer feedback data, their status and success.

This is also important because it shows everyone in the organization that you are focusing on the process of improvement not the collecting of scores.

Originally Posted: 4 April 2016, last update: 16 May 2021