You’ve spent weeks working through the numbers to unpick what customers are saying. After checking through the data and analysing a range of root causes, you have created a really practical plan to solve a key customer issue.

The PowerPoint presentation you’ve created nails each of the points you want to make. It starts right up front with the bad news: Net Promoter data for the business group in question.

Before you walked in, you were fully prepared for this meeting, so how is it that 3 minutes in you’re under attack on the very first slide? A senior manager is pointing accusingly at the screen: “I don’t believe that NPS – you don’t have a big enough sample size.”

This is a problem I am hearing about more and more from customers: when their colleagues in the company don’t like the results of a Net Promoter or customer satisfaction survey, they blame the sample size.

Don’t like the score their department has received? Blame it on sample size. Don’t like actions that are being recommended and want to drag the anchor on making change? Blame it on sample size.

More and more people are hearing about sample sizes and calculations and are using their half-knowledge to frustrate the process. Typically, they have just enough information to be dangerous.

For anyone who really understands sample size and how statistics work, this is frustrating, because it mischaracterises both sample size and the way decisions are made from statistical data.

We use statistics to help us make decisions and the only information we really need is enough to distinguish between alternative outcomes. Any more than that is a waste of time and effort.

Here’s Why that Senior Manager is Upset

The reason that executive is so jumpy is that he or she is probably being rewarded based on customer satisfaction or NPS targets. This is a problem.

It’s not talked about very much, but one of the key implications of all this sample size and confidence interval discussion relates to personal Key Performance Indicators (KPIs).

Typically, business KPIs are set as hard numbers: $x million in revenue, $y million in costs or z seconds of average handle time. In all of these cases, hard single numbers are fine because you have access to the whole population, i.e. you can actually count every dollar of revenue.

The finance department is going to get the boot and the IRS is going to be very unhappy if you get revenue and cost wrong by even a few dollars.

On the other hand, CSAT and Net Promoter scores can only ever be estimates. When you state that the NPS for your business or department or division is 24, what you are really saying is:

The NPS of the sample we took was 24. Based on the attributes of the response we received, we can be 95% certain that the NPS of our customer base is between 22 and 26.

That is a very different statement and one that is at the real heart of the issues that we discussed in our introduction. If people are not confident in the robustness and accuracy of the customer feedback, data collection, and reporting process, they will refuse to believe the results and look for flaws in the system. Hence the large number of sample size questions.
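To make that kind of statement concrete, here is a minimal Python sketch of how a 95% range can be computed from raw Promoter/Neutral/Detractor counts. It assumes each respondent is scored +1, 0 or -1 and uses the normal approximation; the function name `nps_with_margin` and the counts in the usage line are hypothetical, not taken from any real survey.

```python
import math
from statistics import NormalDist


def nps_with_margin(promoters: int, neutrals: int, detractors: int,
                    confidence: float = 0.95) -> tuple[float, float, float]:
    """Return (NPS, lower limit, upper limit) on the usual -100..+100 scale.

    Each respondent counts as +1 (Promoter), 0 (Neutral) or -1 (Detractor),
    so NPS is 100 x the mean score and the range is a normal approximation.
    """
    n = promoters + neutrals + detractors
    p_pro = promoters / n
    p_det = detractors / n
    nps = p_pro - p_det
    # Variance of one +1/0/-1 score: E[X^2] - E[X]^2
    variance = p_pro + p_det - nps ** 2
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # two-sided, ~1.96 at 95%
    margin = z * math.sqrt(variance / n)
    return 100 * nps, 100 * (nps - margin), 100 * (nps + margin)


nps, low, high = nps_with_margin(400, 360, 240)  # hypothetical counts
print(f"Sample NPS {nps:.1f}; 95% range {low:.1f} to {high:.1f}")
# → Sample NPS 16.0; 95% range 11.1 to 20.9
```

The width of the range depends on both the number of responses and the mix of Promoters and Detractors, which is why a single "NPS = 24" hides so much.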

If you are holding people to customer feedback goals, you should probably not set them the same way you set revenue goals.

Rather than single numbers, you should opt for ranges, lower estimated limits or even simple confirmed changes. For example, these would be better NPS KPIs:

- NPS for the year between 22 and 25

- NPS less the Margin of Error > 25

- NPS improved year on year

Note that the first example gets complex, because you will also need to specify a minimum sample size and the ratios of Promoters/Neutrals/Detractors.

In some ways, the second and third examples are the clearest KPIs.
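The second and third KPI styles can be checked mechanically. The Python sketch below is one way to do it, assuming independent random samples each year and the normal approximation for NPS. Treating "improved" as a one-sided z-test at 95% confidence is an assumption for illustration, not a standard definition, and all function names and counts are hypothetical.

```python
import math
from statistics import NormalDist


def nps_and_se(promoters: int, neutrals: int, detractors: int) -> tuple[float, float]:
    """NPS as a fraction (-1..+1) and its standard error (normal approximation)."""
    n = promoters + neutrals + detractors
    p_pro, p_det = promoters / n, detractors / n
    nps = p_pro - p_det
    return nps, math.sqrt((p_pro + p_det - nps ** 2) / n)


def meets_lower_limit_kpi(counts, target_points: float, confidence: float = 0.95) -> bool:
    """Second KPI example: NPS less its margin of error must exceed the target."""
    nps, se = nps_and_se(*counts)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return 100 * (nps - z * se) > target_points


def improved_year_on_year(last_year, this_year, confidence: float = 0.95) -> bool:
    """Third KPI example: one-sided z-test that this year's NPS beats last year's.

    Assumes the two years are independent samples of customers.
    """
    old, se_old = nps_and_se(*last_year)
    new, se_new = nps_and_se(*this_year)
    z = (new - old) / math.sqrt(se_old ** 2 + se_new ** 2)
    return z > NormalDist().inv_cdf(confidence)


# Hypothetical (Promoters, Neutrals, Detractors) counts
last_year, this_year = (450, 300, 250), (500, 300, 200)
print(meets_lower_limit_kpi(this_year, target_points=25))  # True: 30 less ~4.8 is above 25
print(improved_year_on_year(last_year, this_year))         # True
```

Both checks reward a manager only when the data can actually distinguish the result from noise, which is the point of moving away from a single hard number.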

Getting You Back on the Front Foot

So let’s get back to basics and agree on what we are typically trying to achieve in a customer feedback context. I recently wrote about “The only statistical analyses you need to use on customer feedback data” and explored why you only need to perform two sorts of analysis on customer feedback data:

- Is the Score Different?

- Does Changing this Cause That to Change?

Most of these sample size questions are tied up in the first type of analysis: you are trying to determine whether the average score has changed from one sample to the next.

Let’s examine what that means for sample size.

It is not widely understood, but there is no fixed minimum sample size required for any particular statistical analysis. Rules of thumb, such as needing a minimum of 100 responses, are aimed at giving a “reasonable” level of confidence in a worst-case scenario. They are not relevant when you look at a specific situation.

As an example, say you have this question in your customer survey:

How responsive is our sales staff, where 1 is unresponsive and 7 is very responsive?

One of the things you will want to know is whether the score has changed since the last survey. So what sample size do you need to check whether a change has occurred?

Sorry, but we have to dive into some maths for a few seconds to make this point.

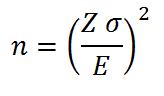

The equation for that sample size is:

$$n = \left(\frac{Z\sigma}{E}\right)^2$$

where

- n is the minimum required sample size

- Z is the z-score for how confident you want to be in the answer, normally 90% or 95% confidence (Z ≈ 1.645 or 1.96 respectively)

- σ is the standard deviation of the scores, i.e. how much inherent variation there is in the population: does almost everyone give you a 5 or 6, or do you get a wide, even spread of scores all the way from 1s up to 7s?

- E is the margin of error or size of the change you want to detect

Don’t worry too much about the details of the maths. What this says is that sample size (n) is not one number but a range of numbers. It changes with the assumptions you make and the attributes of your customers:

- n gets larger the more confident you want to be that you have detected a real change

- n gets larger the more variation you have in your sample

- n gets larger the smaller the change you want to detect.
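Here is the formula as a small Python function, to show how n moves as each assumption changes. It is a sketch: `required_sample_size` is a hypothetical name, Z is taken as the two-sided z-score for the chosen confidence level, and the σ and E values below are made-up examples on the 1-7 responsiveness scale.

```python
import math
from statistics import NormalDist


def required_sample_size(sigma: float, margin: float, confidence: float = 0.95) -> int:
    """n = (Z * sigma / E)^2, rounded up to a whole number of responses."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # ~1.645 at 90%, ~1.96 at 95%
    return math.ceil((z * sigma / margin) ** 2)


# Hypothetical values on the 1-7 scale
print(required_sample_size(sigma=1.0, margin=0.5))                   # 16
print(required_sample_size(sigma=1.0, margin=0.5, confidence=0.90))  # 11: less confidence needed
print(required_sample_size(sigma=1.5, margin=0.5))                   # 35: more variation
print(required_sample_size(sigma=1.0, margin=0.25))                  # 62: smaller change to detect
```

Each line changes one input from the first call, mirroring the three bullet points above.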

So if “responsiveness” has gone up a lot since the last survey, you will need only a relatively small sample to identify the change. If the change has been small, you will need a bigger sample.

(Readers worried about using ordinal data in this way, please read this.)

There is no one right sample size. There is only the sample size that is able to detect what you want to detect.

You do need some statistics to demonstrate your position, but they don’t have to be difficult. You could start with a simple Excel spreadsheet and a t-test.
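If you would rather script the test than build a spreadsheet, here is a stdlib-only Python sketch of Welch's two-sample t statistic (a t-test that does not assume equal variances), comparing hypothetical "responsiveness" scores from two survey waves. It treats the 1-7 scores as interval data, which is the ordinal-data caveat mentioned above. Turning t into a p-value needs the t distribution, for example Excel's `T.TEST(array1, array2, 2, 3)` (two-tailed, unequal variance) or SciPy's `scipy.stats.ttest_ind(..., equal_var=False)`.

```python
import math
from statistics import mean, variance


def welch_t(previous: list[float], current: list[float]) -> tuple[float, float]:
    """Welch's two-sample t statistic and Welch-Satterthwaite degrees of freedom."""
    se2_prev = variance(previous) / len(previous)  # squared standard error of each mean
    se2_curr = variance(current) / len(current)
    t = (mean(current) - mean(previous)) / math.sqrt(se2_prev + se2_curr)
    df = (se2_prev + se2_curr) ** 2 / (
        se2_prev ** 2 / (len(previous) - 1) + se2_curr ** 2 / (len(current) - 1)
    )
    return t, df


# Hypothetical 1-7 "responsiveness" scores from two survey waves
last_wave = [4, 5, 4, 5, 4, 5, 4, 5]
this_wave = [5, 6, 5, 6, 5, 6, 5, 6]
t, df = welch_t(last_wave, this_wave)
print(f"t = {t:.2f}, df = {df:.1f}")  # t = 3.74, df = 14.0
```

A full one-point shift with only eight responses per wave gives a large t, which illustrates the earlier point: big changes need small samples.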

An Extreme Case: 5 Data Points May Be Enough

Let’s go even further with the idea that sample size is not all it’s cracked up to be.

What if you had only five data points? Could you make any useful prediction with so few data points? The executive in your meeting would probably say no, but they’d be wrong.

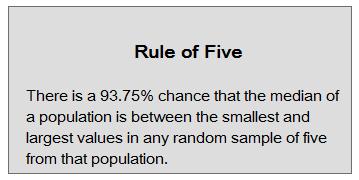

Douglas Hubbard discusses the Rule of Five in his book How to Measure Anything.

Note that we are talking about the median, not the mean (or average), here, so it’s a bit different, but the idea is the same. With just 5 data points you can make some pretty strong statements about the underlying population.

If you take a random sample of 5 customers and they each score your “responsiveness” between 4 and 6 on a 7-point scale, you can be about 94% confident (93.75% under the Rule of Five) that the median score of all customers is also between 4 and 6.

The point is that you may well be able to make important decisions based on that limited amount of data.
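Hubbard’s Rule of Five says that, for a random sample of five, there is a 93.75% chance that the population median lies between the smallest and largest values in the sample. The median can only fall outside that range if all five draws land on the same side of it, and the chance of that is 2 × 0.5⁵ = 6.25%. The Python sketch below computes that probability and checks it with a quick simulation; the function names and the normal population used in the simulation are illustrative assumptions.

```python
import random


def rule_of_five_confidence(n: int = 5) -> float:
    """Chance that the population median lies between a random sample's min and max.

    It falls outside only if all n draws land on the same side of the median:
    probability 2 * 0.5**n for a continuous population.
    """
    return 1 - 2 * 0.5 ** n


def simulated_confidence(n: int = 5, trials: int = 100_000, seed: int = 1) -> float:
    """Monte Carlo check using a standard normal population (median 0)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if min(sample) <= 0.0 <= max(sample):
            hits += 1
    return hits / trials


print(rule_of_five_confidence())  # 0.9375
print(simulated_confidence())      # close to 0.9375; the exact value depends on the seed

scores = [4, 6, 5, 5, 4]  # hypothetical "responsiveness" scores from five customers
print(min(scores), max(scores))   # 4 6: the likely range for the median of all customers
```

On a 7-point scale many customers give identical scores, and with ties the chance that the median falls inside the inclusive min-to-max range is at least 93.75%, so the rule stays on the safe side.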

Net Promoter is a Special Case

However, when you start to consider this idea of sample size, you need to be aware that Net Promoter is a special case. The sample size calculators you will find on the internet simply don’t apply, because NPS is the difference between two proportions (Promoters minus Detractors) rather than a single proportion or average, so the statistics are different.

There are tables provided by vendors in the Net Promoter consulting business (you can Google them), but they overestimate the sample size you need because they only consider the worst-case scenario.

Based on those tables, if, for instance, you had 112 responses, you might think that the sample size was only going to detect changes of +/- 11 points of NPS.

That is the worst case, but if those responses looked like this, you could be confident of detecting changes of +/-5 points, which is more than twice as effective:

- Number of Promoters: 86

- Number of Neutrals: 22

- Number of Detractors: 4

In this case, it is the large proportion of Promoters and the small proportion of Detractors that make the sample size more efficient.
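The effect of the response mix can be sketched in a few lines of Python. This uses the normal-approximation margin of error for a single sample at 95% confidence; vendor tables and comparison calculators may use other confidence levels or compare two samples, so the absolute numbers need not match the figures above. What it does show is the mechanism: with the same 112 responses, the 86/22/4 mix gives a margin roughly half the worst case. The function names are hypothetical.

```python
import math
from statistics import NormalDist

Z95 = NormalDist().inv_cdf(0.975)  # two-sided 95% z-score, ~1.96


def nps_margin(promoters: int, neutrals: int, detractors: int) -> float:
    """95% margin of error (in NPS points) for this particular response mix."""
    n = promoters + neutrals + detractors
    p_pro, p_det = promoters / n, detractors / n
    variance = p_pro + p_det - (p_pro - p_det) ** 2
    return 100 * Z95 * math.sqrt(variance / n)


def worst_case_margin(n: int) -> float:
    """Margin assuming the worst mix: half Promoters, half Detractors, no Neutrals.

    That mix gives the largest possible per-response variance of a +1/0/-1 score (1.0).
    """
    return 100 * Z95 * math.sqrt(1.0 / n)


print(f"worst case: +/-{worst_case_margin(112):.1f} points")      # worst case: +/-18.5 points
print(f"86/22/4 mix: +/-{nps_margin(86, 22, 4):.1f} points")     # 86/22/4 mix: +/-9.6 points
```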

Download this free Net Promoter-specific calculator for the backup stats you need for that executive: Net Promoter® Comparison Tester

Getting Past Slide 1

So now, before you present, would be a good time to go back to your presentation and think through the sample size question.

You should be prepared to discuss sample size and have the statistics to back up your position.

But, you should also have some sympathy for the senior manager and his or her not-so-perfect customer satisfaction goal.