Iron Mountain doubled NPS survey response rates with two simple changes to their survey invite. In doing so they increased internal confidence in their analysis and internal engagement with the feedback process overall.

First, they changed the email invite “from” name to a real person, not a department.

Second, they radically slimmed down the wording in the invite and embedded the first question in the invite itself.

These were great outcomes for their Net Promoter program, so we sat down with two of Iron Mountain’s Australian customer experience managers to discover exactly what they did.

Thank you David Schekoske and Bernice McLeod.

Net Promoter Score at Iron Mountain

Iron Mountain offers more than 65 years of experience in successfully delivering value-added document and media tape management services, relocation, archive, secure destruction, scanning, and digital workflow solutions.

As a worldwide industry leader, Iron Mountain helps businesses meet the challenges of Information Management, Digital Transformation, Secure Storage and Secure Destruction, with smart, strategic solutions and proven expertise.

The Australian Iron Mountain group rolled out the Net Promoter® process in 2014. It has been listening keenly to its customers and acting on that data to improve its business ever since.

Genroe and CustomerGauge have worked with Iron Mountain to support them in that process.

Wanting to gather even more insights from their customers, the company investigated ways to radically increase the number of survey responses they received.

The Need For More Responses

Wanting, and needing, more responses is an almost universal desire in customer feedback programs.

At Iron Mountain the genesis for driving greater numbers of responses was two-fold.

1. Increase Confidence in Data

Smaller volumes of responses mean organisations are less certain of the results and, therefore, less willing to invest in change.

By lifting the number of responses Iron Mountain was seeking to drive more confidence in the results and recommendations derived from those data.
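To make the link between response volume and confidence concrete, here is a minimal sketch of the margin of error around an NPS result. It treats each response as +1 (promoter), 0 (passive) or -1 (detractor) and uses a normal approximation. The response counts are hypothetical, not Iron Mountain's data, and `nps_margin_of_error` is just an illustrative name.

```python
import math


def nps_margin_of_error(promoters, passives, detractors, z=1.96):
    """Return (nps, margin), both in NPS points, for the given response counts.

    z=1.96 corresponds to an approximate 95% confidence level.
    """
    n = promoters + passives + detractors
    if n == 0:
        raise ValueError("at least one response is required")
    p = promoters / n
    d = detractors / n
    nps = p - d
    # Variance of one response scored +1 / 0 / -1: E[x^2] - E[x]^2
    variance = (p + d) - nps ** 2
    margin = z * math.sqrt(variance / n)
    return nps * 100, margin * 100


# Hypothetical mix: 50% promoters, 30% passives, 20% detractors
small = nps_margin_of_error(50, 30, 20)    # 100 responses
large = nps_margin_of_error(100, 60, 40)   # same mix, 200 responses

print(f"100 responses: NPS {small[0]:.0f} +/- {small[1]:.1f}")
print(f"200 responses: NPS {large[0]:.0f} +/- {large[1]:.1f}")
```

With this made-up mix, doubling the responses narrows the margin from roughly 15 points to roughly 11. The margin shrinks with the square root of the response count, so doubling the responses does not halve the uncertainty.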

2. Drive Staff Engagement in Customer Feedback

More confidence in the data, coupled with wider dissemination, drives a higher level of internal engagement with customers and with how the business is performing.

We found [it] has really helped with internal engagement …. We have a good trend in the charts, so we can see the sensitive customers who are providing positive feedback on the delivery-collection experience versus negative feedback: just on that one customer touchpoint. And we can feed that information out to the business internally. The leaders of those departments can see if there’s an improvement over time, or if we’re going backwards.

David Schekoske

Approach

They settled on two separate approaches:

- Improve data quality to create a larger pool of invitees

- Improve the survey invite so more invitees complete the survey request

Steps

1. Increase the number of valid invites

Iron Mountain run two separate NPS surveys: Transactional and Relationship.

The transactional survey relies on frontline staff collecting valid email addresses for relevant interactions. So the first change was to goal frontline staff on the number of email addresses they collect during relevant transactions.

Note that staff are not goaled on the NPS® itself.

Staff were given KPIs to achieve and the data was reviewed to ensure accuracy.

The focus on complete data also reduced the self-filtering that can sometimes go on in frontline situations, where unhappy customers are not invited to respond. When that happens, the score collected might not be a true reflection of the organisation’s position.

Focus KPIs on complete data to reduce the self-filtering that can happen where unhappy customers are not invited to respond

This is a perfect approach because it means you improve your data quality at exactly the time you need it most. It shows staff that you care about the data collected and is an important driver of cultural change.
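The self-filtering effect described above is easy to see with a small worked example. The scores below are invented purely for illustration, and the rule that staff skip customers who would score 4 or lower is an assumption, not something Iron Mountain reported.

```python
def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("at least one score is required")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical: what every customer would have answered if invited
all_customers = [10, 10, 9, 9, 8, 7, 6, 4, 3, 2]

# Hypothetical self-filtering: visibly unhappy customers are never invited
invited_only = [s for s in all_customers if s > 4]

print(f"Everyone invited:  NPS {nps(all_customers):.0f}")
print(f"Self-filtered:     NPS {nps(invited_only):.0f}")
```

In this made-up sample the full picture gives an NPS of 0, while the filtered invite list gives about 43. Goaling staff on complete email capture, rather than on the score, removes the incentive to filter.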

2. Tested an Incentive

After lifting the number of valid invites Iron Mountain focused on lifting the response rate.

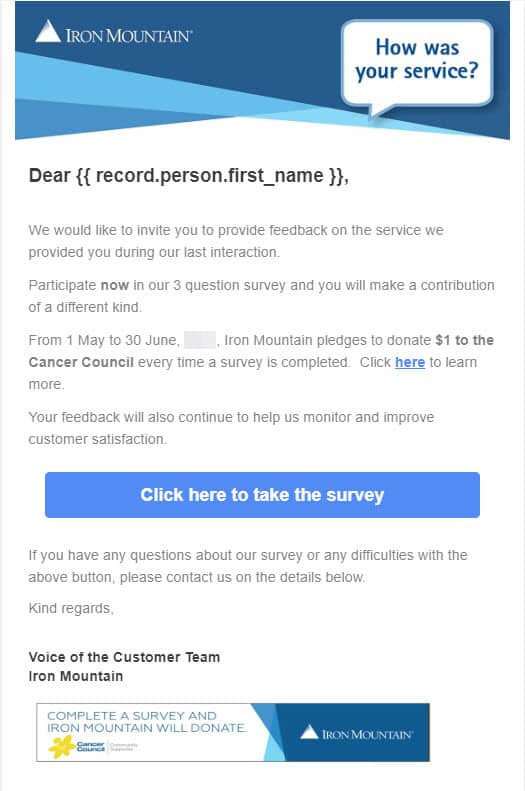

First they tried an incentive for completion, which can work well. In this case Iron Mountain used a “donation to charity” approach, which was also tied into their overall corporate giving values.

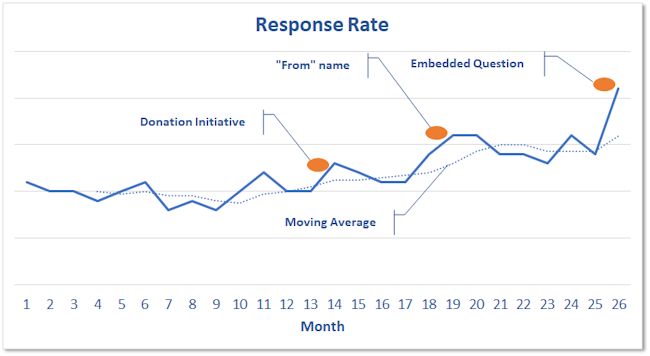

The incentive lifted response rates by around 30% but, for reasons unrelated to the survey, was discontinued after one month and rates returned to normal.

3. Changed the “From” name to a real person

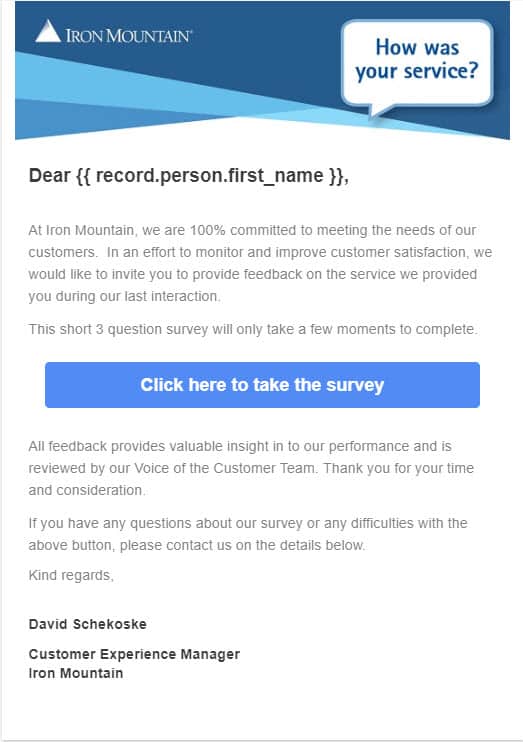

The second, very simple, change was to the “From” name for the survey. This was changed from a departmental name to the name of the person heading up the customer feedback team.

This is an exceedingly simple change and netted a 50% lift in response rates.

Often there is a fear that the person at the bottom of the email will receive high levels of direct contact from customers, and this acts as a disincentive to try this approach. This was a concern at Iron Mountain as well.

I was expecting that [when my name was used] …but in the year that we’ve done it, I’ve received two random emails from customers. In the end, it was based on thousands of emails that we sent out.

David Schekoske
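For readers who build their own invites, the “From” name is just the display-name part of the email's From header. A minimal sketch using Python's standard library follows. The names, addresses and subject line are placeholders, and most survey platforms expose this as a setting rather than code.

```python
from email.message import EmailMessage
from email.utils import formataddr


def build_invite(from_name, from_address, to_address):
    """Build a plain-text survey invite with the given display name."""
    msg = EmailMessage()
    # formataddr combines the display name and address into one header value
    msg["From"] = formataddr((from_name, from_address))
    msg["To"] = to_address
    msg["Subject"] = "How did we do?"  # placeholder subject line
    msg.set_content("We'd value your feedback on your recent experience.")
    return msg


# Before: a departmental sender (hypothetical values)
departmental = build_invite(
    "Customer Feedback Team", "feedback@example.com", "customer@example.org"
)

# After: the same mailbox, but a real person's name (hypothetical values)
personal = build_invite(
    "Alex Example", "feedback@example.com", "customer@example.org"
)

print(departmental["From"])
print(personal["From"])
```

Only the display name changes in this sketch. The sending address can stay the same, which keeps replies flowing into a shared mailbox if that is a concern.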

4. Updated the Invite: fewer words + embedded survey

After reviewing the invite data they confirmed a relatively high number of invite opens, but a low number of survey completes. From this they reasoned that getting the invite opened wasn’t the problem; getting the survey completed was the bigger issue.

They’re at least opening it. … if that opening percentage wasn’t high, then we were going to change the subject header … but we then decided to just go straight to the email content.

David Schekoske

They reasoned that today, most people know about surveys and feedback so they didn’t need lots of explanatory text in the invite.

People already know why companies are doing it these days, so we just thought the less words, the better because if the customer just wants to do the survey, they’ll do it, they don’t really read it.

David Schekoske

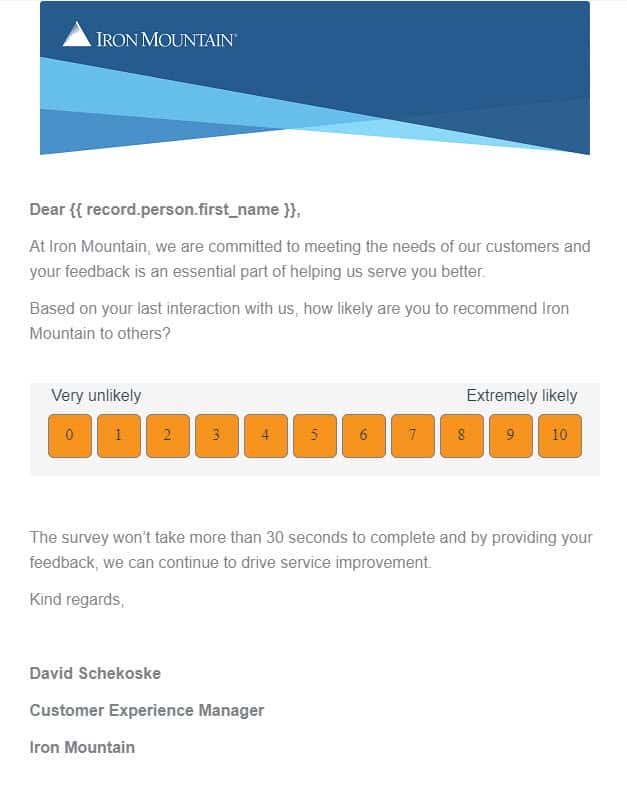

They also decided to embed the first question of the survey directly into the email invite. This is partly to demonstrate how simple and quick the survey is to complete.

Embedding the first question of the survey in the invite has been shown to lift response rates pretty dramatically and this was no exception. Rates jumped another 50% on this change.

In all honesty, this change is a little technically difficult. Creating an email invite that looks good in all, or at least most, email clients means the coding of the invite is a lot more sophisticated than a simple link in the mail. However, the results are worth the one-time effort.
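To give a feel for what embedding the first question involves, here is a minimal sketch that builds an invite with the 0 to 10 scale as clickable links, laid out in a simple table because table layouts tend to render more consistently across email clients. It is not Iron Mountain's or CustomerGauge's implementation. The survey URL, the `invite` and `score` parameters, and the question wording (the standard NPS question) are all assumptions for illustration.

```python
from email.message import EmailMessage
from html import escape
from urllib.parse import urlencode

SURVEY_URL = "https://survey.example.com/respond"  # hypothetical endpoint
QUESTION = "How likely are you to recommend us to a friend or colleague?"


def score_links_html(invite_id):
    """Return a one-row HTML table with a link for each score from 0 to 10."""
    cells = []
    for score in range(11):
        query = urlencode({"invite": invite_id, "score": score})
        href = escape(f"{SURVEY_URL}?{query}")  # escape '&' for the attribute
        cells.append(
            '<td style="padding:6px;border:1px solid #999;text-align:center;">'
            f'<a href="{href}">{score}</a></td>'
        )
    return (
        '<table role="presentation" cellpadding="0" cellspacing="0"><tr>'
        + "".join(cells)
        + "</tr></table>"
    )


def build_embedded_invite(invite_id, to_address):
    """Build a multipart invite: plain-text fallback plus HTML with the scale."""
    msg = EmailMessage()
    msg["To"] = to_address
    msg["Subject"] = "One quick question"  # placeholder subject line
    fallback_link = f"{SURVEY_URL}?{urlencode({'invite': invite_id})}"
    msg.set_content(f"{QUESTION}\nAnswer here: {fallback_link}")
    msg.add_alternative(
        f"<html><body><p>{escape(QUESTION)}</p>"
        f"{score_links_html(invite_id)}</body></html>",
        subtype="html",
    )
    return msg


invite = build_embedded_invite("abc123", "customer@example.org")
scale_html = score_links_html("abc123")
```

Clicking a number records the score and can open the rest of the survey with that answer already filled in. The plain-text part is there for clients that do not render HTML.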

Summary of Results

You can see the impact of each of the different interventions in the chart below.

Over a period of 12 or so months, Iron Mountain has succeeded in lifting their response rates by over 100%.

This has been done with a few, moderately simple, changes to their invite.

In doing so they have increased overall business confidence in the data and driven engagement with both customers and staff.
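As a quick sanity check on the headline figure, here is how the two lasting changes combine if the reported lifts compound, which the article's wording ("jumped another 50%") suggests but does not state outright. The baseline rate is a hypothetical placeholder. The incentive is left out because it was discontinued and rates returned to normal.

```python
baseline_rate = 0.10  # hypothetical starting response rate (10%)

# Lifts reported in the article for the two changes that stayed in place
lifts = {
    "real-person From name": 0.50,
    "shorter invite with embedded first question": 0.50,
}

rate = baseline_rate
for change, lift in lifts.items():
    rate *= 1 + lift
    print(f"After {change}: {rate:.1%}")

total_lift = rate / baseline_rate - 1
print(f"Total lift over baseline: {total_lift:.0%}")
```

Two successive 50% lifts multiply to 2.25 times the baseline, a 125% increase, which is consistent with the "over 100%" result whatever the starting rate was.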

I’d like to thank David Schekoske and Bernice McLeod for their generosity in sharing their results so other organisations can improve their customer feedback processes.