Customer surveys come in many shapes and sizes, but the mistakes in customer feedback questions tend to be the same, and they are repeated time and time again.

These mistakes reduce the effectiveness of the feedback survey and waste both your time and the customer’s.

They are not difficult to correct, so here is how to identify and correct them in your survey.

The Top Customer Survey Question Mistakes

- Making Questions Mandatory

- No “N/a” or “No Opinion” option

- Asking What You Already Know

- Asking Too Much/Private/Sensitive Too Soon

- Too Many Text Responses

- Yes/No Questions

- The 30th Question

- Answers Don’t Match Questions

- Questions Your Customer Can’t Answer Accurately

- Prioritising

- Two Ideas in the Same Question

1. Making Questions Mandatory

I’ve written before about why you should not make your customer feedback questions mandatory and it remains one of the biggest feedback question mistakes.

It is almost never a good idea to make a question mandatory. It is your privilege to have the customer invest time to provide feedback. You should not abuse that privilege by enforcing a response.

Even if your organisation is putting up an incentive (another not-so-great idea), your customer does not owe you a response. That is true even if you feel that some respondents would abuse the incentive by not providing feedback just to get a chance at the prize.

These are not reasons to make questions mandatory. If you offer an incentive you have to assume some small level of abuse.

So while it may seem like a good idea at the time, it almost never is because:

- It is disrespectful to your customers

- It generates bad data: respondents will submit an incorrect or inaccurate response

- It reduces response rates: respondents will bail out of the survey rather than answer

- Written properly, your questions will get answered anyway

So just turn off the “must answer” box in your survey software.

2. No “N/a” or “No Opinion” option

If you do choose to have mandatory questions, leaving out an “N/a” or “No Opinion” option causes even more problems. A person who really does not want to answer the question has no way out except to provide false feedback.

They will give any response to move forward.

Now you are receiving data but you have no idea if it is real or false. You’ll have all the questions filled in but all the data is suspect. A lousy outcome.
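If your survey questions can be exported or scripted, this check is easy to automate before the invites go out. The following is a minimal sketch in Python, not a feature of any real survey product: the `Question` class, its fields and the list of opt-out labels are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical opt-out labels; adjust them to the wording your survey uses.
OPT_OUT_LABELS = {"n/a", "no opinion", "i'd rather not say"}


@dataclass
class Question:
    """A made-up, minimal model of one closed survey question."""
    text: str
    options: list[str] = field(default_factory=list)
    mandatory: bool = False


def has_opt_out(question: Question) -> bool:
    """True if at least one answer option lets the respondent decline."""
    return any(opt.strip().lower() in OPT_OUT_LABELS for opt in question.options)


def trapped_questions(questions: list[Question]) -> list[Question]:
    """Return the mandatory questions that offer no way out."""
    return [q for q in questions if q.mandatory and not has_opt_out(q)]


# Illustrative questions only.
survey = [
    Question("How would you rate our delivery?", ["Poor", "Good", "Excellent"], mandatory=True),
    Question("How important is delivery speed?", ["Unimportant", "Very important", "No opinion"], mandatory=True),
]

for q in trapped_questions(survey):
    print(f"No way out: {q.text}")
```

Any question this flags should either lose its mandatory setting or gain an opt-out response.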

3. Asking What You Already Know

Not too long ago I received an email invite for a survey from a large organisation. Unbelievably, the very first question in the survey was:

What is your email address?

This is simple disrespect for your customer. You are not respecting the time they are investing in responding and you are introducing error in the response you receive.

If at all possible, do not ask customers for information that they expect you to already have in your systems. It will make for a shorter survey and better responses.

4. Asking Too Much/Private/Sensitive Too Soon

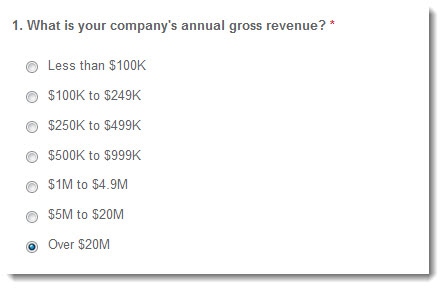

This was the first question in a real survey I received:

Hey, how about buying me a drink and letting me get comfortable before you dive right into the heavy stuff!

For many privately owned businesses, asking about revenue is quite high on the “Hey! I’d rather not tell you that!” scale.

Did you notice the other big problem with this question?

It is mandatory (see the red *) and there is no “I’d Rather Not Say” response. That means that if you don’t want to answer the question you will either give erroneous information or bail out of the survey at this point.

So don’t ask anything too sensitive in the first few questions. Let the respondent get to know the process and the survey a little bit before asking anything too personal.

Ask some easy questions to get things moving. Later in this survey, they ask about social network preferences. That would be a much better and less confrontational way to start the survey.

5. Too Many Text Responses

Selecting a tick box from a list or giving a response on a simple scale (unimportant to very important) are relatively low-mental-effort tasks. On the other hand, providing a written response to a question is a high-effort task.

You need to collect text-based (free format) information to gain greater insight into exactly how you need to improve your business. But free-text questions take a substantial mental toll on the respondent, which means that you need to be careful not to include too many of them in your customer feedback survey.

Typically, you should have only one or two free-text questions in your customer feedback survey. More than that will lower the response rate.
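A quick count of question types will tell you whether a draft survey breaks this rule of thumb. The sketch below assumes you can list your questions with a type label; the labels, the sample questions and the `MAX_FREE_TEXT` limit are illustrative assumptions, not part of any survey tool.

```python
from collections import Counter

# Assumed limit, taken from the "one or two" rule of thumb above.
MAX_FREE_TEXT = 2

# Hypothetical survey outline: (question text, question type).
survey = [
    ("How likely are you to recommend us?", "scale"),
    ("Why did you give that score?", "free_text"),
    ("Which products do you use?", "tick_box"),
    ("What should we improve?", "free_text"),
    ("Anything else you would like to tell us?", "free_text"),
]

# Tally how many questions of each type the draft contains.
type_counts = Counter(kind for _, kind in survey)
free_text_count = type_counts["free_text"]

if free_text_count > MAX_FREE_TEXT:
    print(f"{free_text_count} free-text questions; consider trimming to {MAX_FREE_TEXT}.")
```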

6. Yes/No Questions

I’m sure you’ve seen this question before:

Are you satisfied with our customer service? Yes/No

This type of question is difficult for the customer to answer and provides little information to the business.

It is difficult for the customer to answer because most of the time they will be neither completely satisfied nor completely dissatisfied, so they will not be happy with either response.

This question also provides you with very little nuanced information on what the customer thinks: just a simple yes or no.

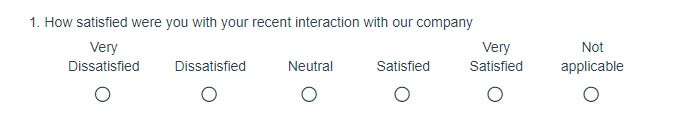

A much more effective way to design the questions is:

Now the customer can provide an answer that meets their opinion and you will get a much more nuanced view of what they think.

7. The 30th Question

By this I just mean surveys that are getting too long. Making the survey longer simply reduces the response rate, increases the load on customers and reduces the accuracy of the answers.

Typically you can collect all the information you need in a customer feedback survey in no more than 10 questions.

If your survey has 20, 30 or even (and I’ve seen them) 50 pages of questions, you should radically redesign it.

Give yourself a 10-question limit and get to work.

8. Answers Don’t Match Questions

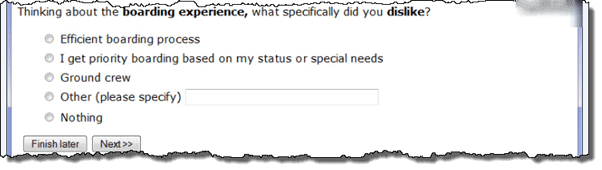

Check out this survey question I received from a major airline:

The responses, mostly, don’t match the questions:

What specifically did you dislike about the boarding experience? – The efficient boarding process.

What specifically did you dislike about the boarding experience? – I get priority boarding based on my status or special needs.

In fact, the first two responses are simple tautologies and add no information. Reworded more clearly, they simply say:

What specifically did you dislike about the boarding experience? – the boarding process.

What specifically did you dislike about the boarding experience? – the priority boarding process.

Responses that would have been more useful could have been:

- The cleanliness of the lounge.

- The clarity of the public address announcements.

- People queuing before their seat row is called and clogging up the aisle for everyone else.

Okay so that last one is a pet peeve of mine, but you get the picture.

Read your responses back, or better yet, have a colleague/sister/uncle do it. Make sure the questions and the answers match before you send out the invites.

9. Questions Your Customer Can’t Answer Accurately

There are lots of pieces of information that I would like to know but that customers simply have no way of providing accurately.

This is not because they don’t want to respond, or because they think they don’t know the answer, but because they simply can’t give an accurate answer.

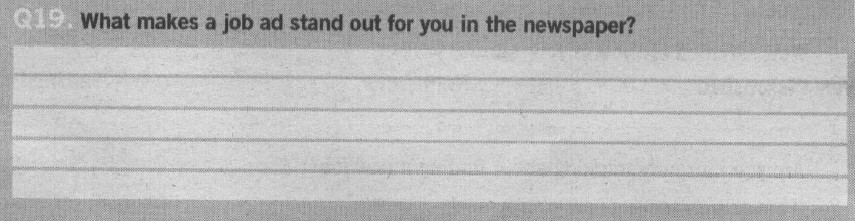

For instance have a look at this question from a newspaper feedback survey. In it, they are trying to determine how to make advertisements stand out more.

The trouble is that while everyone might have an opinion of what makes an advertisement stand out, it is probably not what really makes it stand out. Humans are not very good at determining the real answers to these types of questions.

A much better way to answer this question would be for the newspaper to run tests of different layouts to see which one works best.
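To make the idea of a layout test concrete, here is a minimal sketch of how the results of such a test might be compared. It assumes the newspaper can count how many readers respond to an advertisement under each layout. The function name and all the numbers are made up for illustration, and the two-proportion z-test is simply one common way to compare two response rates, not something the newspaper is known to have used.

```python
import math


def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """Return (z, two-sided p-value) for the difference between two response rates."""
    p_a = hits_a / n_a
    p_b = hits_b / n_b
    # Pooled rate under the assumption that both layouts perform the same.
    pooled = (hits_a + hits_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in responses; the layouts cannot be compared")
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: readers who responded to the ad under each layout.
z, p_value = two_proportion_z_test(hits_a=120, n_a=10_000, hits_b=165, n_b=10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the difference between the layouts is unlikely to be chance, which is evidence that no opinion question can give you.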

Another great example of this problem comes from supermarkets. When shoppers are asked how often they purchase house-brand products, their response is very different to what you find when you analyse actual shopping basket contents.

Real shopping baskets contain many more house-brand products than survey responses would indicate.

This doesn’t imply that respondents are lying but it does show that people are not very good at reliably tracking their actions in the past, or predicting their actions in the future.

Review your survey and make sure that you are asking questions that your respondent is able to answer in a meaningful way.

10. Prioritising

Please prioritize the following features from most important to least important:

Feature 1

Feature 2

Feature 3

…

Feature 10

Feature 11

Feature 12

There are two mistakes in this question:

- Prioritising 12 items is a very difficult thing to do: the task is simply too hard.

- Respondents don’t know the answer: this is similar to the newspaper mistake that I mentioned earlier.

While it would be nice to have your respondents order what is important to them using this type of question, it’s not the best way to do it. When you have lists larger than five items, it becomes too demanding for the brain to order them in any meaningful way.

If you do have a question like this, ensure that you have no more than five elements in the list.

11. Two Ideas in the Same Question

How accurate and clear was our loan documentation?

This is impossible for your customer to answer and impossible for you to analyse because it has two separate ideas in the same question.

Often this seems like a good way to reduce the number of questions in the survey but it causes more problems than it solves.

If the customer is happy with accuracy but unhappy with the clarity of the document, or vice versa, they are stuck. They can’t answer the question. In practice, they can only answer one of the two questions you have posed.

Similarly, when you receive the results, you will be stumped on how to analyse them because you will not know what question they have answered.

So, if you see two ideas in a question, it’s best to simply place them into two separate questions:

How clear was our loan documentation?

How accurate was our loan documentation?
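If you have a long list of questions to review, a crude text check can point you at likely offenders. The sketch below is only a heuristic under a simple assumption: a question containing "and" or "or" might hold two ideas. It will raise false alarms and miss some cases, so treat a flag as a prompt for a human to read the question. The function name is hypothetical.

```python
import re

# Crude heuristic: a conjunction inside the question hints at two ideas.
CONJUNCTION = re.compile(r"\b(and|or)\b", re.IGNORECASE)


def may_be_double_barrelled(question: str) -> bool:
    """Flag questions that contain 'and' or 'or' for a human to review."""
    return bool(CONJUNCTION.search(question))


questions = [
    "How accurate and clear was our loan documentation?",
    "How clear was our loan documentation?",
    "How accurate was our loan documentation?",
]

for q in questions:
    label = "review" if may_be_double_barrelled(q) else "ok"
    print(f"[{label}] {q}")
```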