There are plenty of bad customer satisfaction surveys, and plenty more bad customer satisfaction survey questions, but today we’re looking at the worst mistakes we see when reviewing customer surveys and their questions.

They aren’t just bad for the respondents, they’re also bad for the organisation asking, because the data they collect is wrong and/or misleading. That is something you often only discover when you go to analyse the data.

In this post I’ll review the worst types of customer survey questions and how to avoid making the same mistakes in your surveys.

- Asking Questions To Which You Already Know the Answer

- Biased Questions

- Too Many Questions

- Ambiguous Questions

- Mandatory Questions with no "Not Applicable" Option

- Questions and Answers That Don't Match

- Questions To Which Your Respondent Can't Know the Answer

- Asking Respondents How They Will Act / What is Important

- Double-Barrelled Survey Questions

Asking Questions To Which You Already Know the Answer

It sounds silly, but asking questions that you already know the answer to is a surprisingly common feature of poorly designed survey questions.

Every survey has a limited number of questions you can ask before the respondents just quit. To maximise the number of questions that add information, you must eliminate every question that collects information you already have.

The invite for this example question, from a tier one airline, was a well laid out email with my name, frequent flyer status and current points balance. Clearly they know who I am, so why is the first thing they ask:

What is your email address?

This is only made worse in the following 14 questions that ask basic background information they also already know. If they know my frequent flyer status then they know all the flights I’ve taken with them for years – so why are they asking me for data they already have?

It’s a waste of valuable questions.

Asking questions when you already know the answers does not respect the respondent’s time. Fully 25% of the total questions in this survey are simple demographic or other information which the airline already has.

Key Takeaway: Respect your respondent’s time and maximise valuable data collection by asking only what you cannot determine internally.

Biased Questions

When asking your question, ensure it is worded in a neutral manner so that it does not become a biased question.

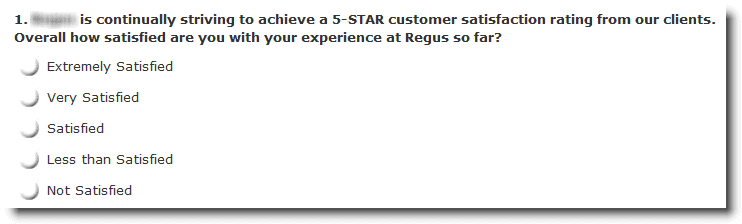

In this case, the question has been worded in a way that may change the way the respondent will answer.

By prefacing the question (“Overall how satisfied…”) with the leading statement “… is continually striving to achieve 5-STAR customer satisfaction…” the company has subtly altered the respondent’s feelings towards their experience.

Typically this is done by organisations trying to maximise some feedback score, but it’s a mistake because the respondent could go either way:

- Reduce the score they were going to give: Some people will read the question and their expectations will rise, depressing the score they give: “well they were going for 5-star and that was only 3 star.”

- Increase the score they were going to give: other people will subtly increase their score because they don’t want to disappoint the company by marking them down.

The problem is that you will never really know the effect.

The real pity is that you can just eliminate the first sentence and the question still makes sense.

Key Takeaway: Review your questions and strip all biased wording from them.

Too Many Questions

This Club Med survey very kindly gave me a progress meter so I knew how many more questions I was going to be asked.

The problem was that on page one it says 1% progress! That means I have 100 pages of questions yet to get through?!?

Feeling somewhat squeamish about how long it was going to take, I started clicking Next buttons. Overall there were more than 25 pages in the survey, with each page having several or more individual questions.

There were probably around 100 questions in this feedback survey asking about every aspect of the location from the reception to the meals, and each of the activities. This is too much, way too much, for a feedback survey.

But now back to that tier one airline survey: that survey was 58 pages long with an incredible 75 questions.

Who in their right mind, except odd people like me, would actually get to the end of this colossal document is beyond me. My guess is that they know it’s way too long and accept a low completion rate, but then use the data from partial completes in their analysis. (By the way, if the airline in question is reading this they should probably toss out my response as an outlier. I can’t say I was too sincere in my responses.)

Their thinking is probably: just keep asking questions in the expectation that some people will respond to the whole survey even if most don’t.

This, I think, is disrespectful to the respondents. They are not just numbers on a spreadsheet. They are people who have invested their time in order to help you, for no intrinsic or extrinsic reward. The least you can do is be respectful of their time.

Alternatively they could develop 3, 4 or 5 sub-versions of this survey. They have a large enough sample size to split their respondents randomly into smaller groups and ask each group only a portion of the survey. That way every question would get answered, not just the first few percent.
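
As a rough illustration of that split, here is a minimal Python sketch. The function names, the five versions and the 1,000 respondent IDs are all made up for the example; only the 75-question count comes from the airline survey above. It deals the questions into blocks and randomly assigns each respondent to one block.

```python
import random


def split_questions(questions, num_versions):
    """Divide the full question list into one block per survey version."""
    # Striding keeps block sizes within one question of each other.
    return [questions[v::num_versions] for v in range(num_versions)]


def assign_versions(respondent_ids, num_versions, seed=0):
    """Randomly assign each respondent to a version, in near-equal groups."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    shuffled = list(respondent_ids)
    rng.shuffle(shuffled)
    # Deal the shuffled respondents out round-robin across the versions.
    return {rid: i % num_versions for i, rid in enumerate(shuffled)}


# 75 questions, as in the airline survey; 5 versions is an assumption.
questions = [f"Q{i}" for i in range(1, 76)]
blocks = split_questions(questions, 5)

# Hypothetical respondent IDs 0..999.
assignment = assign_versions(range(1000), 5)

for version, block in enumerate(blocks):
    group_size = sum(1 for v in assignment.values() if v == version)
    print(f"Version {version}: {len(block)} questions, {group_size} respondents")
```

Each respondent now sees 15 questions instead of 75, and every question still gets a randomly chosen fifth of the sample. In practice you would probably keep one or two core questions (overall satisfaction, say) in every version so the groups can be compared.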

Key Takeaway: Look again at your survey – can you remove any questions? (Go on, you know you can.)

Ambiguous Questions

Several of the questions in that tier one airline survey are ambiguous.

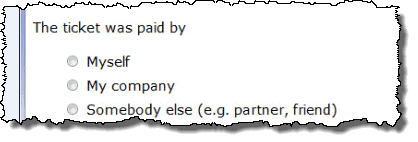

Does this question relate to who is liable for the cost or who made the actual payment? They are two very different ideas. For instance, I may pay for the ticket on my credit card but my company may reimburse me for the expense. Which do I choose?

Ambiguous questions simply lead to ambiguous answers and with ambiguous answers you don’t have reliable data.

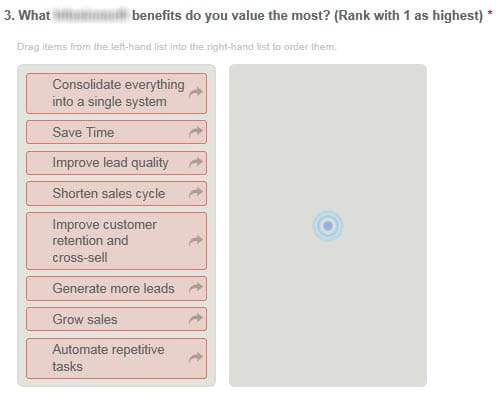

Here’s another example. I understood the question; it’s just that the response options were not mutually exclusive, so it’s impossible to know how to respond.

For instance, “Automate repetitive tasks” is the same as “Save Time”. Should I choose “Grow sales” or “Generate more leads”? They are, in many ways, the same. This looks a lot like not enough time was spent on question design and testing.

Key Takeaway: Ensure your questions are unambiguous.

Mandatory Questions with no “Not Applicable” Option

Respondents are giving of their time and effort to provide information. You are being selfish and fooling yourself by requiring them to respond to all questions.

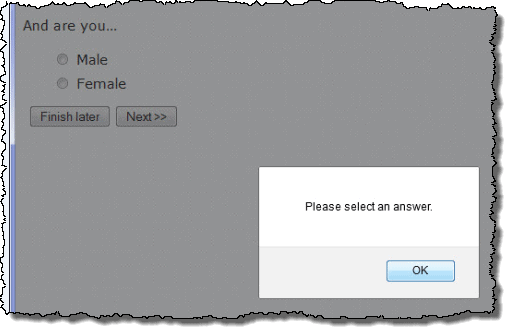

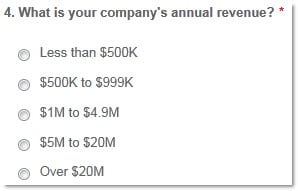

This is especially the case where people might not want to respond to a question, and it is even worse when combined with not providing a “Not Applicable” response. Take this question for example:

Apart from being insensitive to the entire LGBTQI community, how many women not wanting to respond to this question either quit the survey or enter “Male”, and vice versa? We’ll never know.

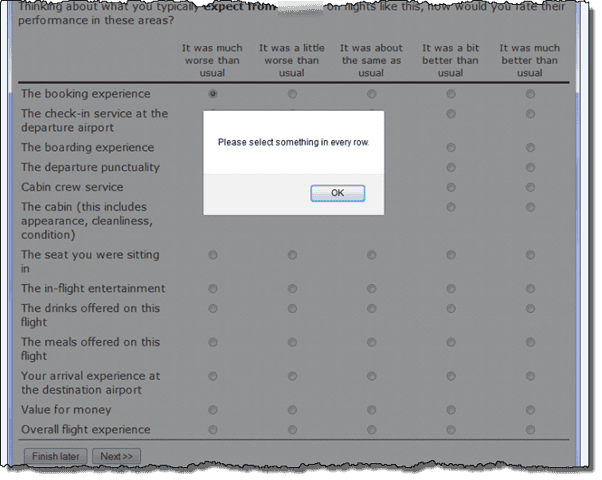

Here is an even worse example:

Don’t have an opinion on any of these items, or didn’t notice what happened? Too bad: you have to provide a response.

You can guess that many of these responses are trash data. But there is no way for the company doing the survey to reliably distinguish between “I just don’t want to answer so I’ll put anything” and “It was about the same as usual.”

And another example from a survey sent to small businesses. Most of the time the respondent will know the answer to this question, but what if they just don’t want to answer? They have no choice but to put a bogus entry here.

In this case the vendor included an incentive to fill out the survey, so perhaps they felt their customers owed them a response to every question. Your customer never owes you a response.

If you’re going to put an incentive out there, you just have to expect that some people will game the system. Making every question mandatory may prevent the 3% from gaming the system but you will annoy the 97% who are not and destroy the value of the data you collect.

In practice there are very, very few times a question needs to be mandatory. If you find yourself ticking that box in your survey software, think hard: is this really necessary, and will it mess up my data?

Key Takeaway: Make no question compulsory unless there is a very, very good reason.

Key Takeaway: Always provide a Not Applicable or similar response.

Questions and Answers That Don’t Match

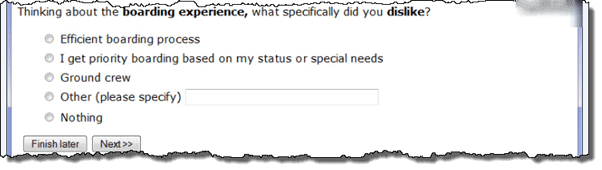

Again, this seems obvious but it happens all the time. Consider this question:

The responses, mostly, don’t match the questions:

What specifically did you dislike about the boarding experience? – the efficient boarding process.

What specifically did you dislike about the boarding experience? – I get priority boarding based on my status or special needs.

Even worse, the first two responses are simple tautologies and add no information. Reworded more clearly they simply say:

What specifically did you dislike about the boarding experience? – the boarding process.

What specifically did you dislike about the boarding experience? – the priority boarding process.

Responses that would have been more useful could have been:

- The cleanliness of the lounge

- The clarity of the public address announcements.

- People queuing before their seat row is called and clogging up the aisle for everyone else.

Okay so that last one is a pet peeve of mine but you get the picture.

I know exactly how it got to this point: an earlier question asked which areas “I disliked” and then piped the data to a branch of the survey. But that just means they didn’t test the survey well enough.

Key Takeaway: Double check, and then check again, to make sure the answers you provide match the questions.

Questions To Which Your Respondent Can’t Know the Answer

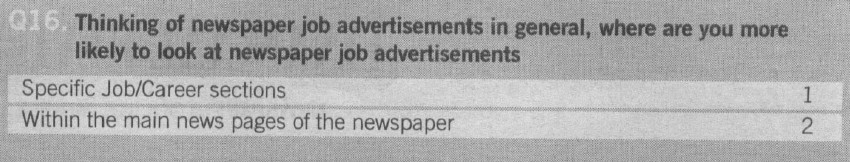

The paper edition of the Australian Financial Review, a prestigious national newspaper in Australia, similar in focus to the Wall Street Journal or The Financial Times in the UK, ran a long customer feedback survey gathering information on a variety of customer needs.

I assume this is to provide feedback to advertisers and themselves. Most of the survey was pretty straightforward but some of the questions were not helpful.

Here they are clearly trying to better understand where consumers look when searching for a job advertisement.

The problem is even if the consumer has a preference as to where they look, they are probably wrong. Humans are notoriously poor at providing objective insights into how they make decisions.

A better approach to answering the question, “where should we place career advertisements”, would be to run a simple physical test.

Place the same advertisement in different places around the newspaper and see how many calls it produces. I’m sure the newspaper could find an advertiser willing to offer their advert for free testing.

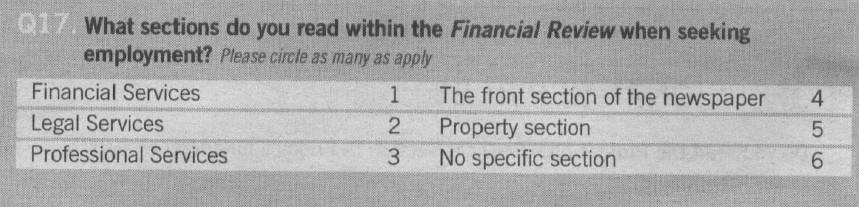

This question has similar issues.

What people think makes an advertisement stand out and what actually makes an advertisement stand out may or may not be the same.

Rather than ask, test. Try the same content in different configurations to see what is most important.
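
Once the test has run, you need a way to tell a real difference from noise. The sketch below is one common option, a two-proportion z-test, written with only the Python standard library. The function name is my own and the call and circulation figures are invented purely for illustration; they are not from the newspaper.

```python
import math


def two_proportion_z(calls_a, readers_a, calls_b, readers_b):
    """Compare response rates for two placements.

    Returns the z statistic and a two-sided p-value under the
    normal approximation, which assumes reasonably large samples.
    """
    rate_a = calls_a / readers_a
    rate_b = calls_b / readers_b
    # Pooled rate under the assumption that placement makes no difference.
    pooled = (calls_a + calls_b) / (readers_a + readers_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / readers_a + 1 / readers_b))
    if std_err == 0:
        # No calls at all (or everyone called): nothing to compare.
        return 0.0, 1.0
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented figures: the same advert placed near the front (A) and
# in the careers section (B), 10,000 copies each.
z, p_value = two_proportion_z(60, 10_000, 30, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the placement, not chance, is driving the difference in calls. That is a statement about what readers actually did, which is exactly what the survey question cannot give you.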

Asking Respondents How They Will Act / What is Important

Picking up on the “Humans are notoriously poor at providing objective insights…” theme, we have questions asking respondents how they will act or what is important.

Asking customers how they will act is unlikely to give you an accurate answer. Not because they will not tell you the truth but because they are unlikely to act in the same way, in practice, that they say they will act, in theory.

For instance, we’ve seen loyalty program research that, when asking customers if they want points or discounts, found they overwhelmingly want discounts. Then those same customers go on to collect points, respond to points promotions, etc.

And there was a set of supermarket research that asked customers if they would buy “store brands”. When you compared actual purchase data with customer responses, many customers with baskets full of store brand product said they would never lower their standards.

If you naively believed what customers told you in either of these circumstances you could easily build the completely wrong customer experience.

Consumer research is fraught with this kind of problem and when designing your research approach you need to act defensively to guard against getting incorrect or inaccurate results. If you don’t, you will get a chart that looks pretty in the report but the insights will be just plain wrong.

Another case where you can’t simply believe what customers say is the “how important is feature x” type of question.

Understanding how important a product or service feature is to the customer is critical in designing good service processes. So questionnaires are often used to determine which features are most important.

The problem comes if the surveys are poorly implemented. You’ve probably seen the bad ones; the wording looks a little like this (paraphrasing):

Q1: How good is our price?

Q2: How important is price?

Q3: How good is our service responsiveness?

Q4: How important is responsiveness?

Q5: How good is our feature x?

Q6: How important is feature x?

Even before I see the results I know what the answers will be. Everything is important: 9s or 10s out of 10.

So what do you know now? Nothing more than you knew before because everything is important. You’re back to square 1.

Even if the results are not all 9s and 10s they will be skewed by what customers want you to think. For instance, no customer is going to tell you that price is unimportant, lest you decide to put up your price.

There are at least two better approaches than this:

- Forced ranking: In this approach you force the customer to rank the importance of each feature using a points approach, a ranking or a best-worst approach.

- Inferred importance: If you design the survey in the right way you can infer what is important based on the answers you get from other questions or actual customer behaviour. This takes a bit of additional analysis but is well worth the extra effort.

Both of these alternatives will deliver a much more accurate outcome.
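
To make inferred importance a little more concrete, here is a minimal sketch of one simple way to do it: correlate each “how good is our…” rating with an overall satisfaction score and rank the attributes by that correlation. This is only one possible technique (regression-based driver analysis is another), and the function names and all the ratings below are invented for illustration.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        # Everyone gave the same score, so there is nothing to correlate.
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def inferred_importance(ratings, overall):
    """Rank attributes by how strongly their ratings track overall satisfaction."""
    scores = {attr: pearson(vals, overall) for attr, vals in ratings.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Invented responses from six customers, each scoring 0-10.
ratings = {
    "price": [7, 8, 6, 7, 8, 7],
    "responsiveness": [3, 9, 5, 8, 2, 6],
    "feature_x": [5, 6, 5, 4, 6, 5],
}
overall = [4, 9, 5, 8, 3, 6]

ranked = inferred_importance(ratings, overall)
for attribute, score in ranked:
    print(f"{attribute}: {score:+.2f}")
```

In this made-up data, responsiveness moves almost in lockstep with overall satisfaction while price barely moves at all, so responsiveness is the attribute to fix first, whatever customers might have said if asked “how important is price?”. Correlation is not causation, of course, and a real analysis needs far more than six respondents.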

Double-Barrelled Survey Questions

Double-barrelled questions are ones that ask about two things in the same question. For example:

Please rate the speed and accuracy of our response to your support question.

You can see there are two ideas, “speed” and “accuracy”.

The problem is that the customer can’t respond to both ideas in the one question. So they will either respond to the “speed” question or they’ll respond to the “accuracy” question.

This is similar to the mandatory questions above: you never know which question the customer has answered. So you never really know what changes you need to make in your business.