In the last 20 years, three customer experience metrics have competed to be the most popular and most widely recognised predictor of customer loyalty: Net Promoter Score (NPS), Customer Satisfaction (CSAT) and Customer Effort Score (CES).

While customer satisfaction is the oldest, it is not necessarily the best. Both NPS and CES provide customer experience and loyalty insights that CSAT cannot.

In this post I’ll draw on my work with dozens of organisations to highlight the differences between NPS and CSAT and help you decide which is best for your business.

What Are NPS and CSAT Used For?

Before suggesting which metric is better we need to understand why a company would use them. There is just one reason to use NPS or CSAT: to predict customer loyalty.

This is an important ability for any company to have. If you are able to predict customer loyalty for an individual customer, it allows you to improve your business in the following ways:

- You can identify customers who might go to your competitors so you can intervene and stop them

- You can understand what customers want in your service and product so you can improve them

- You can identify gaps in your service delivery and product quality so you can correct them

Introduction to Customer Satisfaction

Customer satisfaction was the first commonly used customer survey question designed to predict customer loyalty. From the early 1980s, companies used this question to try to identify loyal and not so loyal customers.

Organisations typically report customer satisfaction as an average of all the response scores, allocating a 1 to a very dissatisfied response and a 5 to a very satisfied response.
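As a minimal sketch of that averaging, assuming a five-point scale: only the 1 and 5 end points come from the description above, while the three middle labels and the function name are my own illustrative assumptions.

```python
from statistics import mean

# Hypothetical label-to-score mapping. Only the end points (1 and 5) are
# described in the text; the middle labels are assumed for illustration.
CSAT_SCALE = {
    "very dissatisfied": 1,
    "dissatisfied": 2,
    "neutral": 3,
    "satisfied": 4,
    "very satisfied": 5,
}


def average_csat(responses):
    """Return the mean CSAT score for a list of response labels."""
    if not responses:
        raise ValueError("no responses to average")
    return mean(CSAT_SCALE[label.lower()] for label in responses)


sample = ["very satisfied", "satisfied", "neutral", "very dissatisfied"]
print(average_csat(sample))  # (5 + 4 + 3 + 1) / 4 = 3.25
```

Note that a single average hides the spread of responses: the same 3.25 could come from mostly neutral customers or from a mix of delighted and very unhappy ones.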

The problem with customer satisfaction is that it is not consistently related to customer loyalty. This fact was widely publicised in a 1995 Harvard Business Review article titled “Why Satisfied Customers Defect”.

In the chart below you can see that depending on industry and product, the relationship between customer satisfaction and customer loyalty changes.

In practice, this varying relationship between satisfaction and loyalty makes it difficult to use customer satisfaction to predict customer loyalty in the real world.

Introduction To Net Promoter Score

NPS was first widely presented in another Harvard Business Review article, “The One Number You Need to Grow”, published in December 2003.

Unhappy with the existing customer loyalty prediction survey questions, particularly customer satisfaction, the author, Fred Reichheld, designed a study to find a better question. The study asked tens of thousands of customers lots of different questions and then cross-referenced their answers with loyalty, using actual purchasing data.

The result was the Net Promoter Score. Its “would recommend” question was most often the best predictor of customer loyalty behaviour.

With its demonstrated customer loyalty prediction effectiveness, companies began to use NPS in their businesses.
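For readers who want to see how the score is usually calculated, here is a minimal sketch assuming the commonly published definition: respondents answer on a 0 to 10 scale, scores of 9 or 10 count as Promoters, scores of 0 to 6 count as Detractors, and NPS is the percentage of Promoters minus the percentage of Detractors. The function name and sample scores are illustrative assumptions.

```python
def net_promoter_score(scores):
    """Return NPS (from -100 to 100) for a list of 0-10 'would recommend' scores."""
    if not scores:
        raise ValueError("no scores supplied")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)   # scores of 9 or 10
    detractors = sum(1 for s in scores if s <= 6)  # scores of 0 to 6
    # Passives (7 or 8) count towards the total but towards neither group.
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical responses: 5 Promoters, 2 Passives, 3 Detractors.
sample = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
print(net_promoter_score(sample))  # 100 * (5 - 3) / 10 = 20.0
```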

See this post for a more comprehensive introduction to Net Promoter Score.

Net Promoter Score Vs Customer Satisfaction

Both Net Promoter Score and Customer Satisfaction are customer survey questions designed to help organisations predict the loyalty of individual respondents.

While average customer satisfaction has been shown to be a poor predictor of loyalty, Net Promoter Score has been shown to be an effective predictor of customer loyalty.

NPS Vs CSAT Case Study

So that’s the theory. Let’s look at a case study to understand how these two measures differ in practice.

A few years ago we performed some Net Promoter Score work for a successful Australian health fund.

The survey process was a best-practice transactional Net Promoter Score approach: they contacted the customer shortly after each touch point interaction and ran a short email survey.

What is unique about the survey data is that they asked both the “customer satisfaction” question (with comments) and the “would you recommend” (NPS) question (with comments).

The data set contained a large volume of free-format comment data, and we helped them to identify the comment themes and then code the comments by theme. They also provided actual customer loyalty data for us to integrate into the analysis.

This gave us a rather good opportunity to compare the effectiveness of customer satisfaction vs. Net Promoter Score.

NPS Vs CSAT As a Customer Loyalty Predictor

In summary we found that:

- A one point increase in “Would Recommend” score results in a decrease of risk of termination by 7.8%

- A one point increase in “Customer Satisfaction” score results in a decrease of risk of termination by 2.9%

This means that as a predictor of customer attrition the standard “Would recommend” question is 2.7 times as sensitive as customer satisfaction.
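The arithmetic behind that figure is simply the ratio of the two effects quoted above. A quick sketch, with variable names of my own choosing:

```python
# Percentage decrease in risk of termination per one-point increase,
# as quoted in the summary above.
would_recommend_effect = 7.8
csat_effect = 2.9

ratio = would_recommend_effect / csat_effect
print(round(ratio, 1))  # 7.8 / 2.9 is roughly 2.69, which rounds to 2.7
```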

We also found that:

- The risk of attrition for Detractor respondents is 1.5 times that of Promoter respondents

NPS Vs CSAT Comment Insights

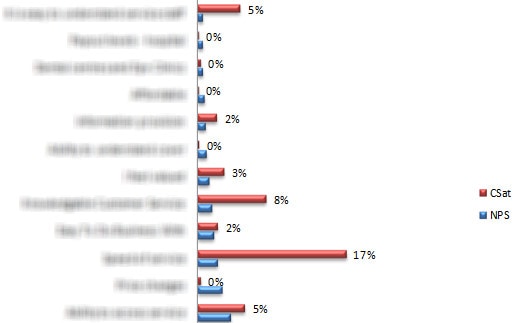

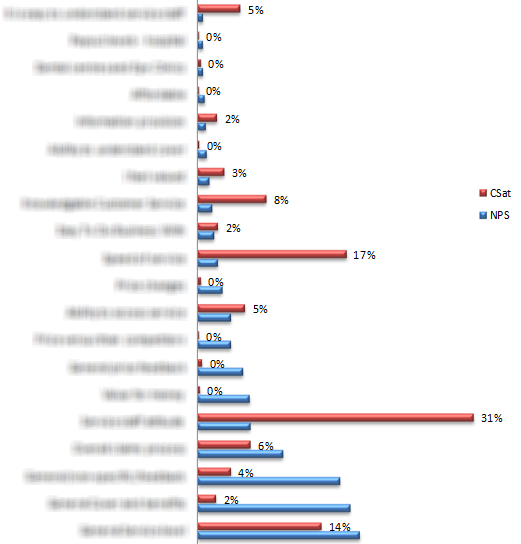

We used the same set of themes to code the data for both Customer Satisfaction and Would Recommend questions. The chart below looks at how often each of the themes was coded for each question.

In the coding process we only identified the theme of the comment, not whether it was positive or negative. So the volumes that you see in the chart are representative of customers’ perceptions of the relevance of that theme to either the customer satisfaction (CSat) or “would recommend” (NPS) question. Each comment could be coded for more than one theme.

The first area to note is the substantially different theme coding rates for “would recommend” and CSat.

Very few themes are coded consistently between the two questions. You will also notice that the NPS themes are coded more evenly. The maximum coding rate for NPS is about 16% but for CSat it peaks at 31%.

Also, almost all of the themes are coded above the 1% rate for NPS while only 60% of the CSat themes are coded at the same rate. This implies that customers consider a wider array of elements of the overall offering when responding to the “would recommend” question than when they respond to the CSat question.

From this we can say the NPS question appears to be a more rounded review of the business.
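To make the coding-rate comparison concrete, here is a minimal sketch of how such rates could be computed. It assumes each comment is stored as a set of theme labels; the data, theme names and function names are hypothetical and are not the health fund's actual data or our exact process.

```python
from collections import Counter


def theme_coding_rates(coded_comments):
    """Return {theme: share of comments coded with that theme}.

    Each item in coded_comments is the set of themes assigned to one comment.
    A comment can carry several themes, so the rates can sum to more than 1.
    """
    if not coded_comments:
        return {}
    counts = Counter(theme for themes in coded_comments for theme in set(themes))
    total = len(coded_comments)
    return {theme: count / total for theme, count in counts.items()}


def share_of_themes_above(rates, all_themes, threshold=0.01):
    """Share of themes in the code frame whose coding rate exceeds threshold."""
    above = sum(1 for theme in all_themes if rates.get(theme, 0.0) > threshold)
    return above / len(all_themes)


# Hypothetical code frame and coded CSat comments, for illustration only.
code_frame = ["speed of service", "staff attitude", "price", "claims"]
csat_comments = [
    {"speed of service"},
    {"speed of service", "staff attitude"},
    {"staff attitude"},
    {"price"},
]

rates = theme_coding_rates(csat_comments)
print(rates["speed of service"])                 # 2 of 4 comments: 0.5
print(share_of_themes_above(rates, code_frame))  # 3 of 4 themes: 0.75
```

Running the same two functions over the comments for each question gives the two figures compared above: the peak coding rate and the share of themes coded above 1%.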

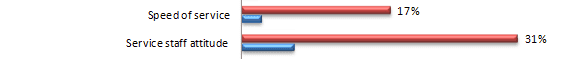

If we dive down into a couple of specific themes, this distinction becomes even more evident. For instance, you can see that Speed of Service and Staff Attitude are top of mind for customers when considering CSat but are not considered as often when answering the NPS question.

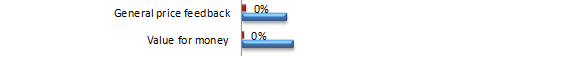

On the other hand, pricing attributes are rarely considered when the customer provides feedback on CSat but for NPS they are coded relatively often.

As I say, this was an imperfect, but relatively unique, opportunity to compare the areas that customers consider when providing a “customer satisfaction” or “would recommend” score.

However, because it was not designed from the outset to test the different themes customers considered when scoring the two questions, we must be careful not to overestimate the significance of these results.

For instance the order of the questions was always the same. This may lead to bias in the response. If you were to design this as an experiment from the ground up you would ensure that each question was shown first 50% of the time.
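A minimal sketch of that kind of counterbalancing, assuming you have a list of respondent IDs before the survey is sent. The function name, question labels and IDs are hypothetical.

```python
import random

QUESTIONS = ("customer satisfaction", "would recommend")


def assign_question_order(respondent_ids, seed=None):
    """Randomly assign question order so each question is shown first to half.

    With an odd number of respondents, the extra one sees
    "would recommend" first.
    """
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    ids = list(respondent_ids)
    rng.shuffle(ids)
    half = len(ids) // 2
    orders = {}
    for position, respondent_id in enumerate(ids):
        csat_first = position < half
        orders[respondent_id] = QUESTIONS if csat_first else QUESTIONS[::-1]
    return orders


orders = assign_question_order(range(1, 11), seed=42)
csat_first_count = sum(1 for order in orders.values() if order[0] == QUESTIONS[0])
print(csat_first_count)  # 5 of the 10 respondents see the CSat question first
```

Shuffling and then splitting the list guarantees an exact 50/50 split, whereas flipping a coin per respondent would only be balanced on average.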

However, even allowing for its imperfect experimental design, it is a very interesting result.

Perhaps in these results we can see part of the reason that CSat is a weaker indicator of future business growth. Our hypothesis is that because customers refer to fewer themes in their responses to the CSat question than the NPS question, CSat focuses more explicitly on the immediate service experience.

Note though that while the immediate service experience may be more important for the CSat score, i.e. it may be skewed towards areas that are important for that experience, it may not cover all of those elements required to drive higher customer loyalty.

Potentially, customers consider a much wider range of areas in the total offering when answering the NPS question. Thus, the NPS question is a broader and more holistic response and better aligned to overall customer loyalty.

NPS Vs Customer Satisfaction FAQ

NPS is based on a person’s willingness to recommend your company to another person and is a good indicator of customer loyalty. Customer Satisfaction is based on a person’s direct satisfaction with your company and is a weaker indicator of customer loyalty.

No, not directly: NPS measures a person’s willingness to recommend your company, whereas customer satisfaction measures how satisfied they are with your company. However, if customers are very satisfied with your company they are likely to also recommend it, i.e. there is a weak link between the two measures.

“NPS customer satisfaction” is not a customer experience term. NPS and Customer Satisfaction are distinct ideas in customer experience.

Last Updated: 29 June 2021