It’s almost as primal as the urge to survive: the urge to compare your performance with others. Corporate Net Promoter Scores® are not immune.

Comparing is good, great in fact, so long as you’re comparing apples with apples. The trouble is that when you have a standard like NPS® it might look like you’re comparing the same thing when really you’re not.

I have raised this issue in several past blog posts, but I now have some more specific research on which to hang my hat. Sam Klaidman and Frederick C. Van Bennekom have examined the responses from some live survey data and written a very insightful paper called “Survey Mode Impact Upon Responses and Net Promoter Scores”. Not what you’d call a catchy title, but it was peer reviewed and so has more credibility than most.

For all the details please read the paper (it is very good and complete) but if you’re in a hurry here are the handy dandy cliff notes.

Comparing Net Promoter Scores is Complex

This is a key finding from the paper:

Our research shows how the administration mode bias can greatly distort the so-called Net Promoter Scores within a company when mixed-mode surveying is conducted.

This says that you can’t compare scores generated using telephone interviews with scores from internet surveys. In fact, you can’t even compare scores generated in mixed-mode surveys (telephone and internet) where the ratio of the survey modes changes.

Why is it so?

At the core of the comparison issue is that net scores, like NPS, are more susceptible to the subtle, and not so subtle, shifts in responses that occur when respondents complete the survey in different modes.

There are many of these skewing factors. For instance:

past research has suggested that telephone surveys exhibit a response effect resulting from acquiescence, social desirability, and primacy and recency effects.

People want to be agreeable when talking to another person, but less so when filling in an internet survey, so telephone survey results come out higher.

And…

Past research has shown that telephone survey modes received higher scores than survey modes with a visual presentation of the scale

So the simple audio vs. visual presentation of the survey changes the score.

Also…

[in telephone surveys there are] Primacy and recency effects result when respondents are drawn to the first or last response option presented to them

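This drags the scoring to the ends of the scale: you get more 9s and 10s, and more 0s and 1s. This is particularly problematic in net scoring approaches, because NPS is calculated as the percentage of promoters (9s and 10s) minus the percentage of detractors (0 to 6), so a response that moves across one of those boundaries changes the score directly.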
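To see why a net score amplifies small shifts in responses, here is a minimal sketch in Python. It uses the standard NPS calculation (percentage of 9s and 10s minus percentage of 0 to 6 responses). The two response lists are invented purely for illustration and are not data from the paper; the function name `nps` is also just a label chosen for this example.

```python
from statistics import mean

def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)

# Hypothetical responses from ten customers answering a web form.
web = [6, 6, 7, 8, 8, 8, 8, 9, 9, 10]

# The same ten customers, assuming three of them answer one point
# higher when speaking to an interviewer (one 6 -> 7, two 8 -> 9).
phone = [6, 7, 7, 8, 8, 9, 9, 9, 9, 10]

print(f"web:   mean={mean(web):.1f}, NPS={nps(web):.0f}")      # web:   mean=7.9, NPS=10
print(f"phone: mean={mean(phone):.1f}, NPS={nps(phone):.0f}")  # phone: mean=8.2, NPS=40
```

In this made-up case the average rating moves by only 0.3 points, but the NPS moves by 30 points, because every changed response happened to cross a promoter or detractor boundary.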

Implications

Comparing Scores With Consistent Administration Is Good

If you are running a Net Promoter, or similar, customer feedback system, the data are useful (and trendable) as long as you are consistent in how you administer the survey. If one month you use telephone surveys and the next internet surveys, or you change the mix of telephone to internet surveys, the data are no longer comparable.

However, if you are consistent in your administration you can trend away to your heart’s content.

So long as the data are being used only within an organization for problem identification and performance trending purposes, that is, examining changes in the perceptions from an organization’s stakeholder base over time for a given survey, these validity issues are not a serious concern.

Comparing Scores With Inconsistent Administration is Bad

Where you need to take extreme care is in taking benchmark NPS figures from other organisations, or from published reports, and comparing your score with them.

This is not to say that those published reports are not internally consistent. If performed properly, they will use a consistent set of approaches, say all internet surveys with randomised ordering.

However, comparing your telephone survey scores to the multi-company, internet-based survey score that the published report generates is not useful or valid.

By extrapolation, using NPS as a cross-company evaluative measure becomes highly problematic if the surveying practices are not identical, including the administrative mode.

How Does This Look in Practice?

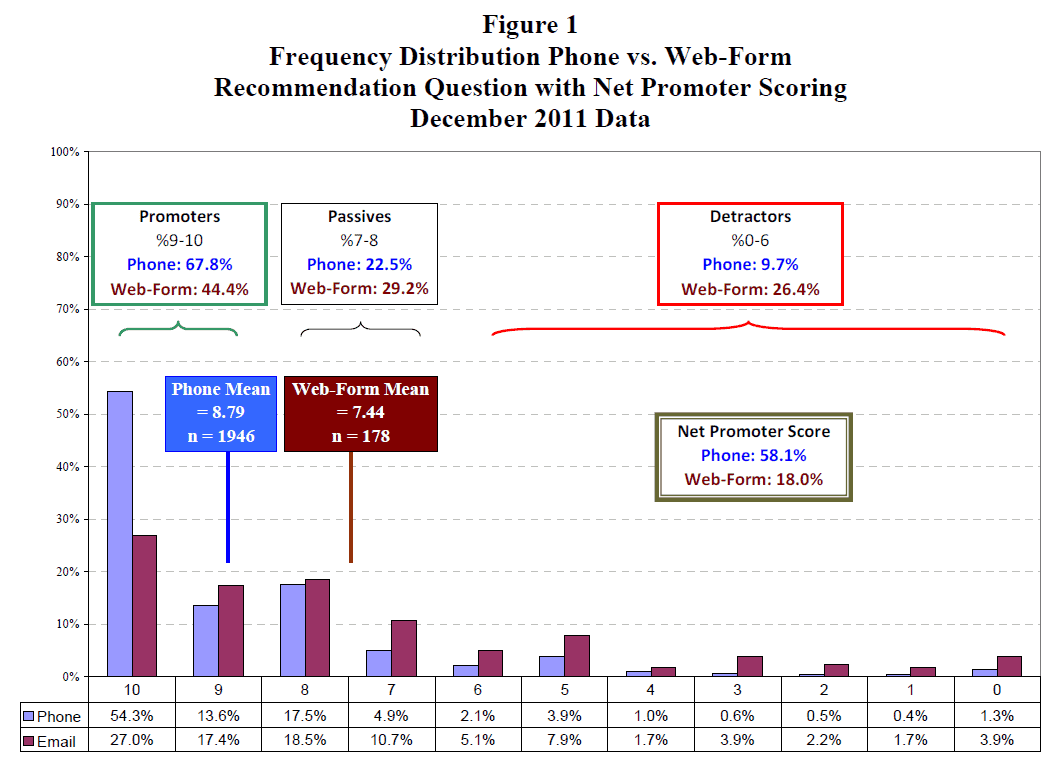

You can read the entire paper for the details of the process used and caveats identified. In summary, the authors were able to access a very useful set of data from an organisation that conducted the same survey via different methods: telephone and internet. The allocation of respondents to each survey mode was relatively random.

In this way they were able to compare the results from each survey method and then calculate the NPS for each survey approach.

The results are quite startling – for the same customer group:

- NPS via telephone: 58

- NPS via web form: 18

That is a 40 point difference; a difference that would be cause for a great deal of investigation in most organisations but, as the authors have shown, is explained almost entirely through the survey administration mode.
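These two figures also show why changing the mix of survey modes breaks a trend line. A combined NPS is the respondent-weighted average of the per-mode scores, so the following rough sketch computes the blended score for different telephone shares. It assumes, purely for illustration, that each mode keeps producing the scores above regardless of the mix; the function name `blended_nps` and the 50% and 20% shares are hypothetical.

```python
PHONE_NPS = 58  # NPS via telephone, from the results above
WEB_NPS = 18    # NPS via web form, from the results above

def blended_nps(phone_pct: int) -> float:
    """Combined NPS when phone_pct percent of responses come by telephone.

    Assumes each mode's own NPS stays fixed, so the overall score is the
    respondent-weighted average of the two per-mode scores.
    """
    if not 0 <= phone_pct <= 100:
        raise ValueError("phone_pct must be between 0 and 100")
    return (phone_pct * PHONE_NPS + (100 - phone_pct) * WEB_NPS) / 100

for phone_pct in (50, 20):
    print(f"{phone_pct}% telephone: blended NPS = {blended_nps(phone_pct):.0f}")
# 50% telephone: blended NPS = 38
# 20% telephone: blended NPS = 26
```

Under these assumptions, moving from half of the responses by telephone to a fifth drops the reported score by 12 points without any customer changing their opinion.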

Don’t Panic

Note that if you are using Net Promoter as part of an ongoing, consistent survey technique then you should care little about these results as you will be comparing apples with apples.

If on the other hand you are trying to benchmark yourself against third party reports or different surveys from different parts of your organisation you should take care that your apples don’t have a very orangey tang to them.
